
Beginner Guide

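Let's get started with WarpParse.

Prerequisites

Installation

curl -sSf https://get.warpparse.ai/setup.sh | bash

macOS note:

- Lift the system's installation restriction so that the installer is allowed to run.

Download the learning examples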

git clone https://github.com/wp-labs/wp-examples.git

Goal 1: Get WarpParse running

Initialize the project

wproj init

mkdir ${HOME}/wp-space ;
cd ${HOME}/wp-space;
wproj init -m full

- Project structure

tree -L 2

.
├── conf
│   ├── wparse.toml
│   └── wpgen.toml
├── connectors
│   ├── sink.d
│   └── source.d
├── data
│   ├── in_dat
│   ├── logs
│   ├── out_dat
│   └── rescue
├── models
│   ├── knowledge
│   ├── oml
│   └── wpl
└── topology
    ├── sinks
    └── sources

Generate test data

The first example is the simplest one: parse from a file and write to a file.

wpgen sample -n 3000

Parse the data with the engine

wparse batch --stat 2 -p

Result:

============================ total stat ==============================

+-------+------------+-----------------+---------+-------+---------+----------+--------+

| stage | name | target | collect | total | success | suc-rate | speed |

+======================================================================================+

| Parse | parse_stat | /nginx//example | | 3000 | 3000 | 100.0% | 3.12 |

|-------+------------+-----------------+---------+-------+---------+----------+--------|

| Pick | pick_stat | file_1 | | 3000 | 3000 | 100.0% | 150.00 |

|-------+------------+-----------------+---------+-------+---------+----------+--------|

| Sink | sink_stat | demo/json | | 1280 | 1280 | 100.0% | 3.56 |

+-------+------------+-----------------+---------+-------+---------+----------+--------+

Data statistics

wproj data stat

- Output

== Sources ==

| Key | Enabled | Lines | Path | Error |

|--------|---------|-------|-------------------------|-------|

| file_1 | Y | 3000 | .../data/in_dat/gen.dat | - |

Total enabled lines: 3000

== Sinks ==

| Scope | Sink | Path | Lines |

|----------|-------------|------------------------------|-------|

| business | demo/json | .../data/out_dat/demo.json | 3000 |

| infra | default/[0] | .../data/out_dat/default.dat | 0 |

| infra | error/[0] | .../data/out_dat/error.dat | 0 |

| infra | miss/[0] | .../data/out_dat/miss.dat | 0 |

| infra | monitor/[0] | .../data/out_dat/monitor.dat | 0 |

| infra | residue/[0] | .../data/out_dat/residue.dat | 0 |

Note: wproj can only report statistics for file-based sources and sinks.

Goal 2: Parse your own logs

Learn to parse logs with WPL

Sample: Linux system log

Oct 10 08:30:15 server systemd[1]: Started Apache HTTP Server.

Add it to the WP project

mkdir ./models/wpl/my_sys

Sample location:

./models/wpl/my_sys/sample.dat

WPL rule location:

./models/wpl/my_sys/parse.wpl
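Before writing the WPL rule, it can help to decide which fields the sample line should yield. The sketch below is plain Python, not WPL, and the field names (timestamp, host, process, pid, message) are assumptions chosen for illustration; it only shows one reasonable way to split the syslog sample above.

```python
import re

# Hypothetical field names; a real WPL rule may name or type them differently.
SYSLOG_LINE = re.compile(
    r"^(?P<timestamp>\w{3}\s+\d{1,2} \d{2}:\d{2}:\d{2}) "  # e.g. "Oct 10 08:30:15"
    r"(?P<host>\S+) "                                      # e.g. "server"
    r"(?P<process>[^\[\s:]+)(?:\[(?P<pid>\d+)\])?: "       # e.g. "systemd[1]:"
    r"(?P<message>.*)$"                                    # rest of the line
)


def parse_syslog_line(line):
    """Split one classic syslog line into a dict, or return None if it does not match."""
    match = SYSLOG_LINE.match(line)
    if match is None:
        return None
    fields = match.groupdict()
    if fields["pid"] is not None:
        fields["pid"] = int(fields["pid"])
    return fields


sample = "Oct 10 08:30:15 server systemd[1]: Started Apache HTTP Server."
parsed = parse_syslog_line(sample)
print(parsed)
```

The resulting dictionary can serve as a checklist of the fields the WPL rule in parse.wpl is expected to produce.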

Generate data

Batch parsing

Docker

Pull the latest image

docker pull ghcr.io/wp-labs/warp-parse:latest

sudo docker run -it --rm --user root --entrypoint /bin/bash <image-id>

getting_started

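This use case is for "quick initialization + baseline verification". It contains a minimal source file, the basic sink groups (default/miss/residue/intercept/error/monitor), and example business routes (if any).

Directory structure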

core/getting_started/
├── README.md  # This document
├── run.sh  # One-step run script
├── conf/  # Configuration directory
│   ├── wparse.toml  # Main WarpParse configuration
│   └── wpgen.toml  # Data generator configuration
├── models/  # Model definitions
│   ├── wpl/  # WPL (WarpParse Language) model definitions
│   ├── oml/  # OML (Object Mapping Language) model definitions
│   │   └── benchmark.oml  # Benchmark rule
│   ├── sources/  # Source configuration
│   │   └── wpsrc.toml  # Source definitions (file / syslog)
│   └── sinks/  # Sink configuration
│       ├── defaults.toml  # Default settings
│       ├── infra.d/  # Infrastructure sinks
│       │   ├── default.toml  # Default sink
│       │   ├── miss.toml  # Missed data handling
│       │   ├── residue.toml  # Residual data handling
│       │   ├── error.toml  # Error data handling
│       │   └── monitor.toml  # Monitoring data handling
│       └── business.d/  # Business sinks
│           ├── business.toml  # Business data handling
│           └── example/  # Example business handling
│               └── simple.toml  # Simple example
├── data/  # Data directory
│   ├── in_dat/  # Input data
│   │   └── gen.dat  # Generated test data
│   ├── out_dat/  # Output data
│   │   ├── default.dat  # Default output
│   │   ├── miss.dat  # Missed data output
│   │   ├── residue.dat  # Residual data output
│   │   ├── error.dat  # Error data output
│   │   ├── monitor.dat  # Monitoring data output
│   │   └── business.dat  # Business data output
│   ├── logs/  # Log files
│   │   ├── wparse.log  # WarpParse run log
│   │   └── wpgen.log  # Data generator log
│   └── rescue/  # Rescue data
│       └── *.rescue  # Rescue data files
├── .run/  # Runtime data
│   ├── authority.sqlite  # Authority database
│   └── rule_mapping.dat  # Rule mapping data
├── sink.d/  # Symbolic link to the sinks directory
└── source.d/  # Symbolic link to the sources directory

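Quick start

Requirements

- WarpParse engine (must be on the system PATH)
- Bash shell
- Basic system tools (awk, grep, wc, etc.)

Run commands

# Enter the use-case directory
cd usecase/core/getting_started

# Run the full flow (generates 3000 test records by default)
./run.sh

# Or specify the number of records to generate
./run.sh 5000

Run options

The run.sh script accepts the following arguments:

- No argument: use the default (generate 3000 records)
- Numeric argument: the number of records to generate (e.g. ./run.sh 5000)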

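Execution logic

Overview

The run.sh script performs the following main steps:

- Environment initialization
  - Keep the required configuration files (wparse.toml, wpgen.toml)
  - Clean up data from previous runs
  - Create the required directory structure
  - Set up the symbolic links (sink.d, source.d)
- WarpParse service initialization
  - Initialize the service with wparse init
  - Create the authority database and the rule mapping
- Configuration and data cleanup
  - Empty the input and output data directories
  - Reset the log files
  - Clean the rescue data directory
- Test data generation
  - Generate test data with wpgen according to the configuration
  - Generate 3000 benchmark log records by default
  - Save the data to data/in_dat/gen.dat
- Input data verification
  - Check the number of generated records
  - Make sure the data format is correct
- Data processing
  - Start the WarpParse engine
  - Load the WPL/OML models
  - Process the input data and dispatch it to the sinks
- Output verification
  - Check the output file of each sink
  - Verify that processing is complete
  - Collect processing statistics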

Data flow

Input data (data/in_dat/gen.dat)

↓

WarpParse engine

↓

┌─────────────┬─────────────┬─────────────┐

│ default │ miss │ residue │

│ sink │ sink │ sink │

└─────────────┴─────────────┴─────────────┘

┌─────────────┬─────────────┬─────────────┐

│ error │ monitor │ business │

│ sink │ sink │ sink │

└─────────────┴─────────────┴─────────────┘

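Key processing nodes

- Source handling
  - File source: reads the log data in gen.dat
  - Syslog source: receives system logs in real time (not enabled in this example)
- OML rule matching
  - The /benchmark* rule matches logs of a specific format
  - Matched data is extracted and processed automatically
- Sink dispatch
  - default: data that was processed normally
  - miss: data not matched by any rule
  - residue: data left over after processing
  - error: errors raised during processing
  - monitor: performance and status monitoring data
  - business: business-related processing results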

Configuration

Main configuration file (conf/wparse.toml)

version = "1.0"
robust = "normal"

[models]
wpl = "./models/wpl" # WPL model directory
oml = "./models/oml" # OML model directory
sources = "./models/sources" # Source configuration directory
sinks = "./models/sinks" # Sink configuration directory

[performance]
rate_limit_rps = 10000 # Rate limit (requests per second)
parse_workers = 2 # Number of parser worker threads

[rescue]
path = "./data/rescue" # Where rescue data is stored

[log_conf]
level = "warn,ctrl=info"
output = "File" # Log output target

[stat]
window_sec = 60 # Statistics window (seconds)

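Data generator configuration (conf/wpgen.toml)

[generator]
mode = "rule" # Generation mode: rule or random
count = 1000 # Number of records to generate
speed = 1000 # Generation speed (records per second)
parallel = 1 # Degree of parallelism

[output]
connect = "file_raw_sink" # Output connector

[output.params]
base = "data/in_dat" # Output base path
file = "gen.dat" # Output file name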

OML rule example (models/oml/benchmark.oml)

name : /oml/benchmark
rule : /benchmark*
---
* : auto = take() ;

This rule defines:

- name: the unique identifier of the rule
- rule: matches logs that start with /benchmark
- action: take() extracts and processes the matched data
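To build intuition for the rule pattern, the sketch below treats /benchmark* as a shell-style glob and checks it against a few rule paths. This is an assumption made for illustration: the document only says the pattern matches entries starting with /benchmark, and the engine's exact matching semantics may differ. The rule paths used here are hypothetical.

```python
from fnmatch import fnmatchcase


def rule_matches(pattern, rule_path):
    """Case-sensitive glob match; '*' matches any run of characters, including '/'."""
    return fnmatchcase(rule_path, pattern)


# Hypothetical rule paths, used only to exercise the pattern.
for path in ["/benchmark/rule_1", "/benchmark", "/nginx/example"]:
    print(path, rule_matches("/benchmark*", path))
```

Under this reading, anything whose path begins with /benchmark is picked up by the model, and everything else falls through to other models or to the miss group.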

Sink configuration structure

Each sink configuration file contains:

version = "2.0"

[sink]
name = "default" # Sink name
type = "file_raw_sink" # Sink type
connect = "default_sink" # Connector name

[sink.params]
base = "data/out_dat" # Output base path
file = "default.dat" # Output file name

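Source configuration (models/sources/wpsrc.toml)

Two kinds of source are supported:

- File source (enabled by default)
  - Reads log data from a local file
  - Suited to batch processing
- Syslog source (disabled by default)
  - Receives system logs in real time
  - Suited to real-time stream processing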

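Verification and troubleshooting

Verifying a successful run

After the run completes, you can verify that it succeeded in the following ways:

- Output file statistics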

wproj data stat

== Sources ==

| Key | Enabled | Lines | Path | Error |

|-----------|---------|-------|-----------------------|-------|

| demo_file | Y | 3000 | ./data/in_dat/gen.dat | - |

Total enabled lines: 3000

== Sinks ==

business | business/out_kv | ././data/out_dat/demo.kv | 2000

business | /example//proto | ././data/out_dat/simple.dat | 1000

business | /example//kv | ././data/out_dat/simple.kv | 1000

business | /example//json | ././data/out_dat/simple.json | 1000

infras | default/[0] | ././data/out_dat/default.dat | 0

infras | error/[0] | ././data/out_dat/error.dat | 0

infras | miss/[0] | ././data/out_dat/miss.dat | 0

infras | monitor/[0] | ././data/out_dat/monitor.dat | 0

infras | residue/[0] | ././data/out_dat/residue.dat | 0

- View the run logs

# WarpParse run log
tail -f data/logs/wparse.log

# Data generator log
tail -f data/logs/wpgen.log

- Validate data integrity

wproj data validate

validate: PASS

Total input: 3000 (source=override)

| Sink | Actual | Expected | Lines/Denom | Verdict |

|-----------------|--------|-----------|-------------|---------|

| /example//proto | 0.333 | 0.33±0.02 | 1000/3000 | OK |

| /example//kv | 0.333 | 0.33±0.02 | 1000/3000 | OK |

| /example//json | 0.333 | 0.33±0.02 | 1000/3000 | OK |

| default/[0] | 0 | 0±0.02 | 0/3000 | OK |

| error/[0] | 0 | 0.01±0.02 | 0/3000 | OK |

| miss/[0] | 0 | [0 ~ 0.1] | 0/3000 | OK |

| monitor/[0] | 0 | [0 ~ 1] | 0/3000 | OK |
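The verdict column in the report above can be read as a ratio check: each sink's line count is divided by the total input, and the result is compared with an expected value plus or minus a tolerance, or with a closed range. The functions below are a minimal sketch of that arithmetic under those assumptions; the names are hypothetical and this is not the wproj implementation.

```python
def check_ratio(lines, total, expected, deviation):
    """Return (actual ratio rounded to 3 places, True if within expected +/- deviation)."""
    actual = lines / total if total else 0.0
    return round(actual, 3), abs(actual - expected) <= deviation


def check_range(lines, total, low, high):
    """Return (actual ratio rounded to 3 places, True if low <= ratio <= high)."""
    actual = lines / total if total else 0.0
    return round(actual, 3), low <= actual <= high


# Rows taken from the report above.
print(check_ratio(1000, 3000, 0.33, 0.02))  # /example//json
print(check_ratio(0, 3000, 0.01, 0.02))     # error/[0]
print(check_range(0, 3000, 0.0, 0.1))       # miss/[0]
```

A sink that received 2000 of 3000 lines against an expectation of 0.33±0.02 would fail this check, which is the kind of drift the report is meant to surface.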

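Common problems and solutions

1. WarpParse command not found

Error message: wparse: command not found

Solution:

- Make sure WarpParse is installed correctly
- Add WarpParse to the system PATH
- Or run it using its full path

2. Insufficient permissions

Error message: Permission denied

Solution:

chmod +x run.sh
chmod -R 755 data/

3. Data generation fails

Possible causes:

- wpgen is misconfigured
- Insufficient disk space
- The parallelism setting is too high

Solution:

- Check the conf/wpgen.toml configuration
- Free up disk space
- Lower the parallel value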

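Evaluation

Task 1

Requirements:

- 1. Run the command from the binary; the input is a file and the output is a file

- 2. The output must match exactly, including both field names and field types

Sample: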

[20/Feb/2018:12:12:14 +0800] 112.195.209.90 - - "GET / HTTP/1.1" 200 190 "-" "Mozilla/5.0 (Linux; Android 6.0; Nexus 5 Build/MRA58N) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/63.0.3239.132 Mobile Safari/537.36" "-"

Result:

{

"remote_addr": "112.195.209.90",

"time_local": "2018-02-20 12:12:14",

"request": "GET / HTTP/1.1",

"status": "200",

"body_bytes_sent": 190,

"http_referer": "-",

"http_user_agent": "Mozilla/5.0 (Linux; Android 6.0; Nexus 5 Build/MRA58N) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/63.0.3239.132 Mobile Safari/537.36",

"http_x_forwarded_for": "-"

}
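As a way to cross-check a WPL/OML solution for this task, the sketch below reproduces the expected record from the sample line in plain Python. It is an independent reference written under the assumption that the two "-" columns after the address are ignored and that time_local keeps the local wall-clock time; it is not how WarpParse itself parses the line.

```python
import json
import re
from datetime import datetime

ACCESS_LINE = re.compile(
    r'^\[(?P<time_local>[^\]]+)\] (?P<remote_addr>\S+) \S+ \S+ '
    r'"(?P<request>[^"]*)" (?P<status>\d{3}) (?P<body_bytes_sent>\d+) '
    r'"(?P<http_referer>[^"]*)" "(?P<http_user_agent>[^"]*)" '
    r'"(?P<http_x_forwarded_for>[^"]*)"$'
)


def parse_access_line(line):
    """Parse one access-log line into the field names and types the task expects."""
    m = ACCESS_LINE.match(line.strip())
    if m is None:
        return None
    local = datetime.strptime(m["time_local"], "%d/%b/%Y:%H:%M:%S %z")
    return {
        "remote_addr": m["remote_addr"],
        "time_local": local.strftime("%Y-%m-%d %H:%M:%S"),
        "request": m["request"],
        "status": m["status"],                        # stays a string
        "body_bytes_sent": int(m["body_bytes_sent"]),  # becomes an integer
        "http_referer": m["http_referer"],
        "http_user_agent": m["http_user_agent"],
        "http_x_forwarded_for": m["http_x_forwarded_for"],
    }


SAMPLE = (
    '[20/Feb/2018:12:12:14 +0800] 112.195.209.90 - - "GET / HTTP/1.1" 200 190 "-" '
    '"Mozilla/5.0 (Linux; Android 6.0; Nexus 5 Build/MRA58N) AppleWebKit/537.36 '
    '(KHTML, like Gecko) Chrome/63.0.3239.132 Mobile Safari/537.36" "-"'
)
record = parse_access_line(SAMPLE)
print(json.dumps(record, indent=2))
```

Comparing this dictionary with the sink output field by field, including the string versus integer distinction, is a quick way to check the "field names and field types" requirement.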

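Task 2

Requirements:

- Run the command from the binary; the input is TCP and the output is a file

- Send the data with the wpgen command

- The output must match exactly, including both field names and field types

Sample: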

http 2018-11-30T22:23:00.186641Z app/my-lb 192.168.1.10:2000 10.0.0.15:8080 0.01 0.02 0.01 200 200 100 200 "POST https://api.example.com/u?p=1&sid=2&t=3 HTTP/1.1" "Mozilla/5.0 (Win) Chrome/90" "ECDHE" "TLSv1.3" arn:aws:elb:us:123:tg "Root=1-test" "api.example.com" "arn:aws:acm:us:123:cert/short" 1 2018-11-30T22:22:48.364000Z "forward" "https://auth.example.com/r" "err" "10.0.0.1:80" "200" "cls" "rsn" TID_x1

Result:

{

"log_type": "http",

"timestamp": 1543616580186,

"elb": "app/my-lb",

"client_host": "192.168.1.10:2000",

"target_host": "10.0.0.15:8080",

"request_processing_time": 0.01,

"target_processing_time": 0.02,

"response_processing_time": 0.01,

"elb_status_code": "200",

"target_status_code": "200",

"received_bytes": 100,

"sent_bytes": 200,

"request_method": "POST",

"request_url": "https://api.example.com/u?p=1&sid=2&t=3",

"request_protocol": "HTTP/1.1",

"user_agent": "Mozilla/5.0 (Win) Chrome/90",

"ssl_cipher": "ECDHE",

"ssl_protocol": "TLSv1.3",

"target_group_arn": "arn:aws:elb:us:123:tg",

"trace_id": "Root=1-test",

"domain": "api.example.com",

"chosen_cert_arn": "arn:aws:acm:us:123:cert/short",

"matched_rule_priority": "1",

"request_creation_time": "2018-11-30 22:22:48.364",

"actions_executed": "forward",

"redirect_url": "https://auth.example.com/r",

"error_reason": "err",

"target_port_list": "10.0.0.1:80",

"target_status_code_list": "200",

"classification": "cls",

"classification_reason": "rsn",

"traceability_id": "TID_x1"

}
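Three of the expected fields involve a conversion rather than a plain copy: timestamp becomes epoch milliseconds, request_creation_time is reformatted with millisecond precision, and the quoted request is split into method, URL, and protocol. The Python sketch below works through those conversions for the sample values; the helper names are hypothetical and the OML functions that achieve the same result are not shown here.

```python
import calendar
from datetime import datetime

ISO_UTC = "%Y-%m-%dT%H:%M:%S.%fZ"


def iso_utc_to_epoch_ms(text):
    """Convert an ISO-8601 UTC timestamp to integer epoch milliseconds (truncating)."""
    dt = datetime.strptime(text, ISO_UTC)
    return calendar.timegm(dt.timetuple()) * 1000 + dt.microsecond // 1000


def iso_utc_to_plain(text):
    """Reformat an ISO-8601 UTC timestamp as 'YYYY-MM-DD HH:MM:SS.mmm'."""
    dt = datetime.strptime(text, ISO_UTC)
    return dt.strftime("%Y-%m-%d %H:%M:%S.%f")[:-3]


def split_request(request):
    """Split 'METHOD URL PROTOCOL' into its three parts."""
    method, url, protocol = request.split(" ")
    return method, url, protocol


print(iso_utc_to_epoch_ms("2018-11-30T22:23:00.186641Z"))
print(iso_utc_to_plain("2018-11-30T22:22:48.364000Z"))
print(split_request("POST https://api.example.com/u?p=1&sid=2&t=3 HTTP/1.1"))
```

Integer arithmetic is used for the millisecond value so that the result does not depend on floating-point rounding.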

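Task 3

Requirements:

- Start wparse with Docker; the input is TCP and the output is a file

- Send the TCP data with the wpgen command

- The output must match exactly, including both field names and field types

- Use sip and dip to decide whether each side is on the internal or the external network

Raw log: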

{"update_time":"2024-12-03 10:23:22","access_ip":"0.0.0.0","packet_data":"dXNlcjphZG1pbiBwYXNzd29yZDoxMjM0NTY=","ip":"1.1.1.1:1111->10.0.0.1:2222","attack_result": "1","log_type":"flow_ty_attack"}

Result:

{

"log_type": "flow_ty_attack",

"access_ip": "0.0.0.0",

"sip": "1.1.1.1",

"sport": 1111,

"dip": "10.0.0.1",

"dport": 2222,

"user_name": "admin",

"password": "123456",

"update_time": "2024-12-03 10:23:22",

"attack_result": "成功",

"src_zone": "External",

"dst_zone": "Internal"

}
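The derived fields in this task come from three small steps: splitting the ip value into source and destination endpoints, Base64-decoding packet_data to recover the credentials, and classifying each address as internal or external. The sketch below reproduces them in Python. Two points are assumptions: the zone test uses Python's ipaddress.is_private (which covers more than the RFC 1918 ranges), and only the attack_result mapping "1" to "成功" is taken from the expected output; other codes are left unchanged.

```python
import base64
import ipaddress
import re

ENDPOINTS = re.compile(r"^([\d.]+):(\d+)->([\d.]+):(\d+)$")
CREDENTIALS = re.compile(r"user:(\S+) password:(\S+)")
ATTACK_RESULT = {"1": "成功"}  # only this mapping is known from the expected output


def zone(ip):
    """Classify an address as Internal (private range) or External."""
    return "Internal" if ipaddress.ip_address(ip).is_private else "External"


def derive_fields(raw):
    """Compute the derived fields of the task from one decoded JSON record."""
    sip, sport, dip, dport = ENDPOINTS.match(raw["ip"]).groups()
    decoded = base64.b64decode(raw["packet_data"]).decode("utf-8")
    user_name, password = CREDENTIALS.search(decoded).groups()
    return {
        "sip": sip, "sport": int(sport),
        "dip": dip, "dport": int(dport),
        "user_name": user_name, "password": password,
        "attack_result": ATTACK_RESULT.get(raw["attack_result"], raw["attack_result"]),
        "src_zone": zone(sip), "dst_zone": zone(dip),
    }


raw = {
    "packet_data": "dXNlcjphZG1pbiBwYXNzd29yZDoxMjM0NTY=",
    "ip": "1.1.1.1:1111->10.0.0.1:2222",
    "attack_result": "1",
}
derived = derive_fields(raw)
print(derived)
```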

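Task 4

Requirements:

- Start wparse with Docker; the input is Kafka and the output is MySQL

- Send the Kafka data with the wpgen command

- The output must match exactly, including both field names and field types

- Complete the OML transformations as indicated by the hints

Raw log: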

222.133.52.20 simple_chars 80 192.168.1.10 select_one left 2025-12-29 12:00:00 {"msg":"hello"} "" aGVsbG8gd29ybGQ= ["val1","val2","val3"] /home/user/file.txt http://example.com/path/to/resource?foo=1&bar=2 [{"one":{"two":"nested"}}] foo bar baz qux 500 ext_value_1 ext_value_2 <script> hello"world 12345

Result:

{
"direct_chars": "13", // direct assignment
"direct_digit": 13,
"simple_chars": "simple_chars", // direct assignment
"simple_port": 80,
"simple_ip": "192.168.1.10",
"ip_ip4_to_int": 3232235786, // IP to integer (192.168.1.10)
"html_unescape": "<script>", // HTML unescape
"html_escape": "<script>", // HTML escape
"str_escape": "hello\\\"world", // string escaping
"select_chars": "select_one", // uses option
"match_chars": "1", // 1 for left, 2 for right
"time": "2026-01-12 19:38:43.452811", // current time, standard format
"date": 20260112, // current time (YYYYMMDD)
"hour": 2026011219, // current time (YYYYMMDDHH)
"timestamp": 1766980800000, // millisecond timestamp, Beijing time (from the log time 2025-12-29 12:00:00)
"timestamp1": 1766980800, // seconds (from the log time 2025-12-29 12:00:00)
"timestamp2": 1766980800000, // milliseconds (from the log time 2025-12-29 12:00:00)
"timestamp3": 1766980800000000, // microseconds (from the log time 2025-12-29 12:00:00)
"timestamp4": 1768246851, // seconds, UTC+8 (from the current time)
"timestamp5": 1768246851009, // milliseconds, UTC+8 (from the current time)
"timestamp6": 1768246851009507, // microseconds, UTC+8 (from the current time)
"base64_en": "aGVsbG8=", // base64
"base64_de": "hello world",
"array_get": "val1", // array element access
"array_str": "[\"val1\",\"val2\",\"val3\"]", // array to JSON
"name": "file.txt", // file name taken from the file path
"path": "/path/to/resource", // directory path taken from the full path
"domain": "example.com", // values taken from the HTTP URL
"host": "example.com",
"uri": "/path/to/resource?foo=1&bar=2",
"params": "foo=1&bar=2",
"obj": "nested", // nested value access
"splice": "foo:bar|baz:qux", // string concatenation
"num_range": "大于 0 小于 1000", // range check
"extends": { // extension fields
"extend1": "ext_value_1",
"extend2": "ext_value_2"
}
}
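Several of the expected values can be checked by hand before writing the OML. The sketch below recomputes the deterministic ones in Python: the IPv4-to-integer conversion, the epoch values for the log time interpreted as UTC+8, the Base64 round trips, and the URL and path parts. It is a verification aid under the assumption that the log time is Beijing time, as the hints state; fields derived from the current time are not reproducible and are left out.

```python
import base64
import ipaddress
import posixpath
from datetime import datetime, timedelta, timezone
from urllib.parse import urlsplit

BEIJING = timezone(timedelta(hours=8))

# ip_ip4_to_int: dotted quad to a 32-bit integer.
ip_int = int(ipaddress.IPv4Address("192.168.1.10"))

# timestamp1..3: the log time read as UTC+8, in seconds / milliseconds / microseconds.
log_time = datetime.strptime("2025-12-29 12:00:00", "%Y-%m-%d %H:%M:%S").replace(tzinfo=BEIJING)
epoch_s = int(log_time.timestamp())
epoch_ms = epoch_s * 1000
epoch_us = epoch_s * 1_000_000

# base64_de / base64_en.
decoded = base64.b64decode("aGVsbG8gd29ybGQ=").decode("utf-8")
encoded = base64.b64encode(b"hello").decode("ascii")

# name: last component of the file path.
file_name = posixpath.basename("/home/user/file.txt")

# domain / path / params / uri from the URL.
url = urlsplit("http://example.com/path/to/resource?foo=1&bar=2")
uri = url.path + "?" + url.query

print(ip_int, epoch_s, epoch_ms, epoch_us)
print(decoded, encoded, file_name, url.hostname, url.path, url.query, uri)
```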

Core Examples

This directory contains core end-to-end examples and scenario-based configurations for quickly validating parsing, routing, filtering, metrics, and verification capabilities.

Case List

| Case | Purpose | Validated Features |

|---|---|---|

| confvars_case | Configuration variables usage | Variable substitution, environment overrides |

| error_reporting | Error data reporting with multi-format output | Error routing, JSON/KV output, OML transformation |

| file_source | File-based data source ingestion | File source, batch processing |

| knowdb_case | Knowledge database queries and data association | SQL-like OML queries, CSV knowledge bases, dynamic lookup |

| oml_examples | Comprehensive OML transformation | Conditional matching, range matching, tuple matching, knowledge base queries |

| prometheus_metrics | Prometheus metrics export | HTTP /metrics endpoint, counters, gauges, histograms |

| sink_filter | Sink-level filtering and data splitting | Filter rules, multi-path routing, expectation validation |

| sink_recovery | Sink failure handling and data recovery | Rescue files, interruption/recovery cycle, replay pipeline |

| syslog_udp | UDP Syslog source integration | UDP syslog reception, parsing, routing |

| tcp_roundtrip | TCP input/output end-to-end link | TCP source/sink, data flow validation |

| wpl_missing | WPL field missing and fault tolerance | Optional fields, miss group handling, data completeness |

| wpl_pipe | WPL pipeline preprocessing | Base64 decoding, unquote/unescape, trim operations |

| wpl_success | Successful full-chain WPL parsing | Multi-rule parsing, data_type tags, routing validation |

| stat_test | Statistical testing | Statistics validation, test scenarios |

Common Directory Structure

Each case typically follows this structure:

case_name/

├── README.md # Documentation

├── run.sh # Execution script

├── conf/

│ ├── wparse.toml # Main WarpParse configuration

│ └── wpgen.toml # Data generator configuration (optional)

├── models/

│ ├── wpl/ # WPL parsing rules

│ ├── oml/ # OML transformation models

│ ├── knowledge/ # Knowledge base data (CSV/SQL)

│ └── sinks/ # Sink routing configuration

├── data/

│ ├── in_dat/ # Input data

│ ├── out_dat/ # Output data

│ └── logs/ # Processing logs

└── topology/ # Alternative structure for some cases

Quick Start

# Enter case directory

cd core/<case_name>

# Run the case

./run.sh

# Check statistics

wproj data stat

# Validate output

wproj data validate

FAQ

- Filter not working:
  - Paths are resolved relative to the current working directory; ensure filter.conf is accessible from sink_root
  - Expressions must be parseable by TCondParser; test with simple expressions first
- Prometheus not started:
  - Unless a Prometheus connector is configured and the monitor group is switched to it, no /metrics endpoint will be available
- Parameter override failed:
  - params keys must be in the connector's allow_override whitelist

Convention over configuration: always explicitly set name for each sink to get a stable full_name and more readable validation reports; for filter-based cases, put filter conditions in filter.conf for reuse and review.

Core用例 (中文)

本目录收录核心端到端用例与场景化配置,便于快速验证解析、路由、过滤、度量与校验能力。

用例清单

| 用例 | 目的 | 验证特性 |

|---|---|---|

| confvars_case | 配置变量使用 | 变量替换、环境变量覆盖 |

| error_reporting | 错误数据报表与多格式输出 | 错误路由、JSON/KV 输出、OML 转换 |

| file_source | 基于文件的数据源输入 | 文件源、批处理 |

| knowdb_case | 知识库查询与数据关联 | SQL 风格 OML 查询、CSV 知识库、动态查找 |

| oml_examples | 综合 OML 转换示例 | 条件匹配、范围匹配、元组匹配、知识库查询 |

| prometheus_metrics | Prometheus 指标导出 | HTTP /metrics 端点、计数器、仪表、直方图 |

| sink_filter | Sink 级过滤与数据分流 | 过滤规则、多路径路由、期望值校验 |

| sink_recovery | Sink 故障处理与数据恢复 | 救急文件、中断/恢复流程、回放管道 |

| syslog_udp | UDP Syslog 源集成 | UDP syslog 接收、解析、路由 |

| tcp_roundtrip | TCP 输入/输出端到端链路 | TCP 源/汇、数据流验证 |

| wpl_missing | WPL 字段缺失与容错 | 可选字段、miss 组处理、数据完整性 |

| wpl_pipe | WPL 管道预处理 | Base64 解码、unquote/unescape、trim 操作 |

| wpl_success | WPL 成功解析全链路 | 多规则解析、data_type 标签、路由验证 |

| stat_test | 统计测试 | 统计验证、测试场景 |

通用目录结构

每个用例通常遵循以下结构:

case_name/

├── README.md # 文档说明

├── run.sh # 执行脚本

├── conf/

│ ├── wparse.toml # WarpParse 主配置

│ └── wpgen.toml # 数据生成器配置(可选)

├── models/

│ ├── wpl/ # WPL 解析规则

│ ├── oml/ # OML 转换模型

│ ├── knowledge/ # 知识库数据(CSV/SQL)

│ └── sinks/ # Sink 路由配置

├── data/

│ ├── in_dat/ # 输入数据

│ ├── out_dat/ # 输出数据

│ └── logs/ # 处理日志

└── topology/ # 部分用例的替代结构

快速开始

# 进入用例目录

cd core/<case_name>

# 运行用例

./run.sh

# 查看统计

wproj data stat

# 校验输出

wproj data validate

常见问题

- filter 未生效:

- 路径基于当前工作目录解析;确保

filter.conf相对sink_root可访问 - 表达式需能被 TCondParser 解析;可先用简单表达式测试

- 路径基于当前工作目录解析;确保

- Prometheus 未启动:

- 未配置 Prometheus 连接器并将

monitor组切换到该连接器时,不会有/metrics端点

- 未配置 Prometheus 连接器并将

- 覆盖参数失败:

params的键必须在连接器allow_override白名单中

约定优于配置:尽量为每个 sink 显式给出

name,以获得稳定的full_name与更可读的校验报表;对过滤型用例,请把拦截条件放在filter.conf文件,便于复用与审阅。

Configuration Variables

This example demonstrates how to use configuration variables for dynamic configuration management.

Purpose

Validate the ability to:

- Define and use configuration variables in TOML files

- Override variables via environment variables

- Apply variables across sources, sinks, and model configurations

Features Validated

| Feature | Description |

|---|---|

| Variable Substitution | Using ${VAR} syntax in configuration files |

| Environment Overrides | Overriding config values via environment variables |

| Default Values | Setting fallback values for undefined variables |

| Cross-Config References | Using variables across multiple configuration files |

Quick Start

cd core/confvars_case

# Run with default variables

./run.sh

# Run with custom environment variables

LINE_CNT=5000 STAT_SEC=5 ./run.sh

Directory Structure

confvars_case/

├── conf/wparse.toml # Main config with variable references

├── models/

│ ├── wpl/ # Parsing rules

│ ├── oml/ # Transformation models

│ └── sinks/ # Sink routing

└── data/ # Runtime data

Example Usage

# In wparse.toml

[performance]

rate_limit_rps = ${RATE_LIMIT:-500000} # Default: 500000

[log_conf]

level = "${LOG_LEVEL:-info}" # Default: info
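The ${VAR:-default} form above follows the familiar shell convention: use the variable if it is set and non-empty, otherwise fall back to the default. The sketch below shows that substitution in Python. It assumes shell-like semantics and is not WarpParse's own loader; the function name expand is hypothetical.

```python
import os
import re

_VAR = re.compile(r"\$\{(?P<name>[A-Za-z_][A-Za-z0-9_]*)(?::-(?P<default>[^}]*))?\}")


def expand(text, env=None):
    """Replace ${NAME} and ${NAME:-default} using env (defaults to os.environ)."""
    env = os.environ if env is None else env

    def replace(match):
        value = env.get(match.group("name"))
        if value:                          # set and non-empty wins
            return value
        if match.group("default") is not None:
            return match.group("default")  # fall back to the inline default
        return match.group(0)              # no value, no default: leave as written

    return _VAR.sub(replace, text)


print(expand('level = "${LOG_LEVEL:-info}"', {}))
print(expand("rate_limit_rps = ${RATE_LIMIT:-500000}", {"RATE_LIMIT": "1000"}))
```

Leaving an unresolved ${NAME} untouched, rather than replacing it with an empty string, makes a missing variable easy to spot in the resulting configuration.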

配置使用变量 (中文)

本用例演示如何在配置中使用变量进行动态配置管理。

Error Reporting

This example demonstrates error data reporting with multi-format output for system monitoring logs.

Purpose

Validate the ability to:

- Parse skyeye_stat system monitoring logs

- Transform data using OML models

- Output to multiple formats (JSON, KV)

- Collect and analyze error data for reporting

Features Validated

| Feature | Description |

|---|---|

| WPL Parsing | Parsing system monitoring metrics (CPU, memory, disk) |

| OML Transformation | Data enrichment with take(), Time::now(), fmt(), object{} |

| Multi-format Output | JSON and KV format outputs |

| Field Collection | Using collect for dynamic field gathering |

| Pipeline Processing | Using pipe ... | base64_en for data encoding |

OML Features Demonstrated

- take(): Extract fields from parsed results
- Time::now(): Get current timestamp
- fmt(): String formatting with template
- object {}: Create nested objects
- collect: Collect matching fields dynamically
- pipe ... | base64_en: Pipeline processing with Base64 encoding

Quick Start

cd core/error_reporting

./run.sh

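error_reporting

This use case demonstrates "error data reporting with multi-format output": system monitoring logs of type skyeye_stat are parsed, transformed with OML, and written in several output formats (JSON, KV, and so on). It is suited to collecting and analyzing error data and to generating reports.

Directory structure

- conf/: configuration directory
  - conf/wparse.toml: main configuration
  - conf/wpgen.toml: data generator configuration
- models/: rules and routing
  - models/wpl/skyeye_stat/: skyeye_stat parsing rules
  - models/wpl/example/simple/: example parsing rules
  - models/oml/skyeye_stat.oml: OML transformation model
  - models/sinks/business.d/: business routing
  - models/sinks/infra.d/: infrastructure groups
  - models/sources/wpsrc.toml: source configuration
- data/: runtime data directory

WPL parsing rules

skyeye_stat rule (models/wpl/skyeye_stat/parse.wpl)

Parses skyeye system monitoring logs and extracts system metrics such as CPU, memory, and disk: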

package skyeye_stat {

#[copy_raw(name:"raw_msg")]

rule case1 {

(digit<<,>>, digit, symbol(skyeye), _,

time:updatetime\|\!, ip:sip\|\!, chars:log_type\|\![),

some_of (

json( symbol(空闲CPU百分比)@name, @value:cpu_free),

json( symbol(空闲内存kB)@name, @value:memory_free),

json( symbol(1分钟平均CPU负载)@name, @value:cpu_used_by_one_min),

// ... more metrics

)\,

}

}

OML transformation model

skyeye_stat.oml

Applies a second round of transformation and enrichment to the parsed data:

name : skyeye_stat

rule : skyeye_stat/*

---

src_key : chars = take() ;

recv_time : time = Time::now() ;

pos_sn : chars = take() ;

updatetime : time = take() ;

sip : chars = take() ;

log_type : chars = take() ;

cust_tag : chars = fmt("[{pos_sn}-{sip}]", @pos_sn, @sip ) ;

value : obj = object {

process,memory_free : float = take() ;

cpu_free,cpu_used_by_one_min, cpu_used_by_fifty_min : float = take() ;

disk_free,disk_used,disk_used_by_one_min, disk_used_by_fify_min : float = take() ;

} ;

time_all : array = collect take( keys : [ *time* ] ) ;

raw_msg : chars = pipe take() | base64_en ;

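Quick usage

Build the project

cargo build --workspace --all-features

Run the use case

cd core/error_reporting
./run.sh

The script performs the following steps:

- Initialize the environment and configuration (conf is kept so that custom configuration is loaded)
- Generate sample data with wpgen
- Run wparse batch parsing
- Verify the output statistics

Manual execution

# Initialize the configuration
wproj check
wproj data clean
wpgen data clean

# Generate sample data
wpgen sample -n 3000 --stat 3

# Run batch parsing
wparse batch --stat 3 -p -n 3000

# Verify the output
wproj data stat
wproj data validate

Tunable parameters

Override via environment variables:

- LINE_CNT: number of lines to generate (default 3000)
- STAT_SEC: statistics interval in seconds (default 3)

Business group configuration

skyeye_stat business group (models/sinks/business.d/skyeye_stat.toml)

Supports multiple output formats:

- JSON output
- KV output

OML features demonstrated

This use case demonstrates the following OML features:

- take(): extract fields from the parsed result
- Time::now(): get the current time
- fmt(): string formatting
- object {}: create nested objects
- collect: collect matching fields
- pipe ... | base64_en: pipeline processing with Base64 encoding

Common problems

Q1: Parsing fails

- Confirm that the input data matches the format defined by the WPL rule
- Check data/logs/wparse.log for detailed error information
- Check that the optional fields in some_of match correctly

Q2: OML transformation fails

- Confirm that rule in the OML model matches the correct WPL package path
- Confirm that the field names agree with the WPL parsing result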

File Source

This example demonstrates file-based data source ingestion for batch processing scenarios.

Purpose

Validate the ability to:

- Read input data from local files

- Process data through WPL parsing rules

- Route parsed data to configured sinks

- Handle file-based batch processing workflows

Features Validated

| Feature | Description |

|---|---|

| File Source | Reading data from local file system |

| Batch Processing | Processing files in batch mode |

| Data Routing | Routing parsed data to business/infra sinks |

Quick Start

cd core/file_source

./run.sh

Directory Structure

file_source/

├── conf/wparse.toml # Main configuration

├── models/

│ ├── wpl/ # Parsing rules

│ ├── sinks/ # Sink routing

│ └── sources/ # File source config

└── data/

├── in_dat/ # Input files

└── out_dat/ # Output files

FileSource (中文)

本用例演示基于文件的数据源输入场景,适用于批处理工作流。

KnowDB Case

This example demonstrates knowledge database (KnowDB) queries and data association for enriching parsed logs with business data.

Purpose

Validate the ability to:

- Load CSV-based knowledge bases

- Query knowledge bases using SQL-like OML syntax

- Dynamically associate parsed log data with business data

- Enrich parsed results with external lookups

Features Validated

| Feature | Description |

|---|---|

| CSV Knowledge Base | Loading data from CSV files |

| SQL-like Queries | select ... from ... where in OML |

| Dynamic Lookup | Runtime data association |

| Table Configuration | knowdb.toml for table mapping |

Knowledge Base Structure

models/knowledge/example/

├── create.sql # Table schema definition

├── data.csv # Data file

└── insert.sql # Data insertion statements

OML Query Example

# Query math score from example_score table

math_score = select math from example_score where id = read(sid);
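The OML statement above behaves like a keyed lookup: for each record, the value of sid is used to fetch the math column from the example_score table. The sketch below mimics that lookup with Python's built-in sqlite3 module. The table schema and the sample rows are hypothetical, chosen only to make the example runnable; the real table is defined by create.sql and data.csv in the knowledge directory.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# Hypothetical schema and rows; the real ones come from create.sql / data.csv.
conn.execute("CREATE TABLE example_score (id INTEGER PRIMARY KEY, math INTEGER)")
conn.executemany("INSERT INTO example_score (id, math) VALUES (?, ?)", [(1, 90), (2, 75)])


def lookup_math(connection, sid):
    """Return the math score for the given id, or None when no row matches."""
    row = connection.execute(
        "SELECT math FROM example_score WHERE id = ?", (sid,)
    ).fetchone()
    return row[0] if row else None


record = {"sid": 1}
record["math_score"] = lookup_math(conn, record["sid"])
print(record)
```

Using a bound parameter for the id, rather than string formatting, keeps the lookup safe when the key comes from untrusted log data.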

Quick Start

cd core/knowdb_case

./run.sh

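knowdb_case

This use case demonstrates "knowledge base (KnowDB) queries and data association": after logs are parsed by WPL rules, a select ... from ... where statement in OML looks up related data in the knowledge base, dynamically associating the parsed logs with business data.

Directory structure

- conf/: configuration directory
  - conf/wpgen.toml: data generator configuration (UDP syslog output)
- models/: rules and routing
  - models/wpl/example/: WPL parsing rules (nginx log parsing)
  - models/knowledge/example/: knowledge base data (CSV format)
  - models/sinks/business.d/: business routing
  - models/sinks/infra.d/: infrastructure groups
  - models/sources/wpsrc.toml: source configuration (UDP syslog)
- data/: runtime data directory
  - data/in_dat/: input data
  - data/out_dat/: sink output
  - data/logs/: log directory

Knowledge base configuration

Knowledge base table definition (models/knowledge/example/)

models/knowledge/example/
├── create.sql # Table schema definition
├── data.csv # Data file
└── insert.sql # Data insertion statements

Sample data (data.csv):

name,pinying
令狐冲,linghuchong
任盈盈,renyingying

Knowledge base configuration (models/knowledge/knowdb.toml)

Defines the load path of the knowledge base and the table mappings.

WPL parsing rules

Parsing rule (models/wpl/example/parse.wpl)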

package /example {

#[tag(from_dc: "warplog/cs/nginx")]

rule nginx {

(ip:sip,2*_,time<[,]>,http/request",http/status,digit,chars",http/agent",_")

}

}

OML Examples

This example provides comprehensive OML (Object Modeling Language) transformation demonstrations covering data transformation, field mapping, conditional matching, and knowledge base queries.

Purpose

Validate the ability to:

- Transform parsed data using OML models
- Perform conditional matching with match expressions
- Query knowledge bases with SQL-like syntax
- Create nested objects and collect fields dynamically

Features Validated

| Feature | Description |

|---|---|

| Conditional Matching | match ... {} with pattern matching |

| Range Matching | in(digit(1), digit(3)) for value ranges |

| Tuple Matching | (read(city), read(count)) for multi-field conditions |

| Negation Matching | !chars(warp) for exclusion patterns |

| Optional Fields | take(option:[severity]) for optional handling |

| Knowledge Base Query | select ... from ... where SQL-like queries |

| Wildcard Collection | * = take() for passthrough fields |

| Object Creation | object { ... } for nested structures |

| Pipeline Processing | pipe ... | base64_en for transformations |

OML Models Included

| Model | Description |

|---|---|

| csv_example.oml | CSV data processing with conditional matching |

| skyeye_stat.oml | System monitoring data transformation |

| work_case.oml | Business case data processing |

Quick Start

cd core/oml_examples

./run.sh

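oml_examples

This use case demonstrates OML (Object Modeling Language) transformation in a range of scenarios: a rich set of OML examples covers data transformation, field mapping, conditional matching, knowledge base queries, and other advanced features. It is suited to learning OML syntax and best practices.

Directory structure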

core/oml_examples/
├── README.md  # This document
├── run.sh  # One-step run script
├── conf/  # Configuration directory
│   ├── wparse.toml  # Main WarpParse configuration
│   └── wpgen.toml  # Data generator configuration
├── models/  # Rules and models
│   ├── oml/  # OML transformation models
│   │   ├── csv_example.oml  # CSV data processing with conditional matching
│   │   ├── skyeye_stat.oml  # System monitoring data transformation
│   │   └── work_case.oml  # Work case data processing
│   ├── knowledge/  # Knowledge base data
│   │   ├── knowdb.toml  # Main knowledge base configuration
│   │   ├── address/  # Address knowledge base
│   │   │   ├── create.sql  # Table creation statement
│   │   │   ├── data.csv  # Address data
│   │   │   └── insert.sql  # Insert statement
│   │   ├── example/  # Example knowledge base
│   │   │   ├── create.sql  # Table creation statement
│   │   │   ├── data.csv  # Example data
│   │   │   └── insert.sql  # Insert statement
│   │   └── example_score/  # Score knowledge base
│   │       ├── create.sql  # Table creation statement
│   │       ├── data.csv  # Score data
│   │       └── insert.sql  # Insert statement
│   ├── sinks/  # Sink configuration
│   │   ├── defaults.toml  # Default settings
│   │   ├── business.d/  # Business routing
│   │   │   ├── csv_example.toml  # CSV example output
│   │   │   ├── skyeye_stat.toml  # System monitoring output
│   │   │   └── work_case.toml  # Work case output
│   │   └── infra.d/  # Infrastructure configuration
│   │       ├── default.toml  # Default sink
│   │       ├── error.toml  # Error data handling
│   │       ├── miss.toml  # Missed data handling
│   │       ├── monitor.toml  # Monitoring data handling
│   │       └── residue.toml  # Residual data handling
│   ├── sources/  # Source configuration (empty)
│   └── wpl/  # WPL parsing rules (empty)
├── data/  # Runtime data
│   ├── in_dat/  # Input data
│   ├── out_dat/  # Output data
│   │   ├── csv_example.dat  # CSV processing result
│   │   ├── skyeye_adm.json  # SkyEye ADM output
│   │   ├── skyeye_pdm.json  # SkyEye PDM output
│   │   └── work_case.json  # Work case output
│   └── logs/  # Log files
│       ├── gen.dat  # Generated sample data
│       └── *.log  # Assorted log files
└── .run/  # Runtime data

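Quick start

Requirements

- WarpParse engine (must be on the system PATH)
- Bash shell

Run commands

# Enter the use-case directory
cd core/oml_examples

# Run the full flow (generates 3000 test records by default)
./run.sh

Execution logic

Overview

The run.sh script performs the following main steps:

- Environment initialization
  - Keep the required configuration files (wparse.toml, wpgen.toml)
  - Clean up data from previous runs
  - Create the required directory structure
  - Initialize the knowledge base data
- Service check
  - Validate the configuration and models with wproj check
  - Make sure all dependencies are in order
- Data cleanup
  - Empty the input and output data directories
  - Reset the log files
  - Clear the generator cache
- Sample data generation
  - Generate test data with wpgen sample
  - The data covers several formats, including CSV and JSON
  - The data simulates realistic business scenarios
- Data parsing
  - Start WarpParse in batch mode
  - Load the OML models to transform the data
  - Enrich the data with knowledge base queries
- Output verification
  - Count the records in each output file
  - Verify that the transformations are correct
  - Check the knowledge base query results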

Data flow

Generated data (data/logs/gen.dat)
        ↓
WarpParse OML engine
        ↓
┌─────────────┬─────────────┬─────────────┐
│ csv_example │ skyeye_stat │  work_case  │
│ processing  │  transform  │   parsing   │
└─────────────┴─────────────┴─────────────┘
       ↓             ↓             ↓
┌─────────────┬─────────────┬─────────────┐
│ csv_example │ skyeye_adm  │  work_case  │
│    .dat     │    .json    │    .json    │
└─────────────┴─────────────┴─────────────┘
              ┌─────────────┐
              │ skyeye_pdm  │
              │    .json    │
              └─────────────┘

OML examples in detail

1. csv_example.oml - CSV data processing with conditional matching

name: csv_example

rule : csv_example/*

---

occur_time = Time::now() ;

year = take();

sid = digit(10);

quart = match read(month) {

in ( digit(1) , digit(3) ) => chars(Q1);

in ( digit(4) , digit(6) ) => chars(Q2);

in ( digit(7) , digit(9) ) => chars(Q3);

in ( digit(10) , digit(12) ) => chars(Q4);

_ => chars(Q5);

};

level = match ( read(city) , read(count) ) {

( chars(cs) , in ( digit(81) , digit(200) ) ) => chars(GOOD);

( chars(cs) , in ( digit(0) , digit(80) ) ) => chars(BAD);

( chars(bj) , in ( digit(101) , digit(200) ) ) => chars(GOOD);

( chars(bj) , in ( digit(0) , digit(100) ) ) => chars(BAD);

_ => chars(NOR);

};

vender = match read(product) {

chars(warp) => chars(warp-rd)

!chars(warp) => chars(other)

};

severity: chars = match take(option:[severity]) {

digit(0) => chars(未知);

digit(1) => chars(信息);

digit(2) => chars(低危);

digit(3) => chars(高危(漏洞));

};

math_score = select math from example_score where id = read(sid) ;

* = take() ;

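Features demonstrated:

- Timestamp generation: Time::now() gets the current time
- Range matching: in (digit(1), digit(3)) matches a numeric range
- Tuple matching: (read(city), read(count)) matches on several fields together
- Negation matching: !chars(warp) excludes a specific value
- Optional fields: take(option:[severity]) handles an optional field
- Knowledge base query: select ... from ... where looks up data dynamically
- Passthrough fields: * = take() keeps all fields not yet handled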
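The match expressions in csv_example.oml can be read as ordered rule tables. The Python sketch below restates the quart, level, and vender rules under two assumptions that the document does not spell out: in(a, b) is inclusive at both ends, and the first matching arm wins. It is a reading aid, not the OML engine's evaluation logic.

```python
def quarter(month):
    """Mirror of the quart match: map a month number to Q1..Q4, else Q5."""
    for low, high, label in [(1, 3, "Q1"), (4, 6, "Q2"), (7, 9, "Q3"), (10, 12, "Q4")]:
        if low <= month <= high:  # assumed inclusive on both ends
            return label
    return "Q5"


def level(city, count):
    """Mirror of the tuple match on (city, count); the first matching arm wins."""
    arms = [
        ("cs", 81, 200, "GOOD"),
        ("cs", 0, 80, "BAD"),
        ("bj", 101, 200, "GOOD"),
        ("bj", 0, 100, "BAD"),
    ]
    for arm_city, low, high, label in arms:
        if city == arm_city and low <= count <= high:
            return label
    return "NOR"


def vender(product):
    """Mirror of the negation match: warp maps to warp-rd, anything else to other."""
    return "warp-rd" if product == "warp" else "other"


print(quarter(5), level("cs", 120), level("bj", 100), level("sh", 50), vender("nginx"))
```

Writing the arms as data makes the thresholds easy to compare against the OML source, for example that the GOOD boundary is 81 for cs but 101 for bj.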

2. skyeye_stat.oml - System monitoring data transformation

name : skyeye_stat

rule : skyeye_stat/*

---

vendor = chars(wparse) ;

v_ip = ip(127.0.0.1) ;

recv_time = Time::now() ;

cust_tag = fmt("[{pos_sn}-{sip}]", @pos_sn, @sip ) ;

value = object {

process,memory_free : float = take() ;

cpu_free,cpu_used_by_one_min, cpu_used_by_fifty_min : float = take() ;

disk_free,disk_used,disk_used_by_one_min, disk_used_by_fify_min : float = take() ;

} ;

time_all = collect take( keys : [ *time* ] ) ;

raw_msg = pipe take() | base64_en ;

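Features demonstrated:

- Constant assignment: chars(wparse) and ip(127.0.0.1) set fixed values
- Formatted strings: fmt() fills a template string
- Nested objects: object { ... } creates structured data
- Field collection: collect take(keys: [*time*]) gathers fields dynamically
- Pipeline processing: pipe ... | base64_en processes data as a stream

3. work_case.oml - Work case data processing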

name : work_case

rule : work_case/*

---

agent_id = take() ;

symbol = take() ;

botnet = take() ;

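# Conditional branches
symbol: chars = match read(symbol) {
chars(web_cve) => chars(Web漏洞);
chars(os_cve) => chars(系统漏洞);
_ => read(symbol);
};

# Dynamic path collection
path_list = collect read(keys: [details*path]) ;

# Pass through the remaining fields
* = take() ;

Features demonstrated:

- Simple field extraction: basic data extraction
- Conditional replacement: value-based field mapping
- Wildcard collection: [details*path] collects fields by fuzzy match
- Remaining-field handling: keeps all fields that are not otherwise defined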

Configuration

Main configuration file (conf/wparse.toml)

version = "1.0"

robust = "normal"

[models]

wpl = "./models/wpl"

oml = "./models/oml"

sources = "./models/sources"

sinks = "./models/sinks"

[performance]

rate_limit_rps = 500000 # high rate limit, suited to batch processing

parse_workers = 2 # number of parser worker threads

[rescue]

path = "./data/rescue"

[log_conf]

level = "warn,ctrl=info,launch=info,source=info,sink=info,stat=info,runtime=warn,oml=warn,wpl=warn,klib=warn,orion_error=error,orion_sens=warn"

output = "File"

[stat]

window_sec = 60

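Data generator configuration (conf/wpgen.toml)

[generator]

mode = "sample" # use predefined samples

count = 1000 # number of records to generate

speed = 1000 # generation rate (records per second)

parallel = 1 # degree of parallelism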

[output]

connect = "file_raw_sink"

[output.params]

base = "data/in_dat"

file = "gen.dat"

[log_conf]

level = "info"

output = "File"

Knowledge-base configuration (models/knowledge/knowdb.toml)

version = "2.0"

[default]

transaction = true # enable transactions

batch_size = 2000 # batch size

[csv]

has_header = true # the CSV contains a header row

delimiter = "," # field delimiter

encoding = "utf-8" # encoding

[table.example_score]

mapping = "header" # map columns by header name

range = "5,110" # range of data rows

[table.address]

mapping = "index" # map columns by index

range = "5,110" # range of data rows

Business sink configuration example (models/sinks/business.d/csv_example.toml)

version = "2.0"

[sink]

name = "csv_example"

type = "file_csv_sink"

connect = "csv_sink"

[sink.params]

base = "data/out_dat"

file = "csv_example.dat"

[sink.expect]

basis = "total_input"

ratio = 0.7 # expect 70% of the data to reach this sink

deviation = 0.01 # allow a 1% deviation

Verification

Verifying a successful run

- Output file statistics

wproj data stat

- Data integrity check

wproj data validate

- Inspect output samples

# CSV output

head -20 data/out_dat/csv_example.dat

# JSON output

jq . data/out_dat/skyeye_adm.json | head -40

Using different output formats

Configure a different sink type:

- JSON output: type = "file_json_sink"

- CSV output: type = "file_csv_sink"

- KV output: type = "file_kv_sink"

- Raw output: type = "file_raw_sink"

Last updated: 2025-12-16

Prometheus Metrics

This example demonstrates Prometheus metrics export for monitoring system integration and performance observation.

Purpose

Validate the ability to:

- Export internal WarpParse metrics via Prometheus sink

- Expose HTTP /metrics endpoint for scraping

- Support Grafana integration for visualization

Features Validated

| Feature | Description |

|---|---|

| Prometheus Sink | Exporting metrics via Prometheus protocol |

| HTTP Endpoint | /metrics endpoint at configurable port |

| Counter Metrics | Input/output counts, error counts |

| Gauge Metrics | Queue depth, active connections |

| Histogram Metrics | Parse duration, processing latency |

Metrics Types

| Type | Examples |

|---|---|

| Counter | wparse_input_total, wparse_output_total |

| Gauge | Queue depth, active connections |

| Histogram | wparse_parse_duration_seconds |

Quick Start

cd core/prometheus_metrics

./run.sh

# Fetch metrics

curl -s http://localhost:35666/metrics

Grafana Integration

# Input rate

rate(wparse_input_total[1m])

# Output distribution by sink

sum by (sink) (wparse_output_total)

# P99 latency

histogram_quantile(0.99, wparse_parse_duration_seconds_bucket)

prometheus_metrics (中文)

本用例演示“Prometheus 指标导出“的场景:通过 Prometheus sink 导出 warp-flow 的内部运行指标,支持通过 HTTP /metrics 端点拉取指标数据。适用于监控系统集成与性能观测。

目录结构

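- conf/: configuration directory

  - conf/wparse.toml: main configuration (including the Prometheus export settings)

- models/: rules and routing

  - models/wpl/: WPL parsing rules

  - models/oml/: OML transformation models

  - models/sinks/business.d/: business routing

  - models/sinks/infra.d/: infrastructure groups (including the monitor group)

  - models/sources/wpsrc.toml: source configuration (UDP syslog)

- data/: runtime data directory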

Prometheus 配置

Monitor 组配置

在 models/sinks/infra.d/monitor.toml 中配置 Prometheus sink:

[[sink_group]]

name = "/sink/infra/monitor"

connect = "prometheus_sink"

[[sink_group.sinks]]

name = "prometheus_exporter"

Prometheus 连接器

在 connectors/sink.d/ 中添加 Prometheus 连接器:

[connector]

id = "prometheus_sink"

type = "prometheus"

[connector.params]

endpoint = "127.0.0.1:35666"

快速使用

构建项目

cargo build --workspace --all-features

运行用例

cd core/prometheus_metrics

./run.sh

脚本执行流程:

- 初始化环境与配置

- 启动 wparse(syslog 接收 + Prometheus 导出)

- 等待服务启动(约 3 秒)

- 使用 wpgen 生成并发送样本数据

- 停止服务并校验输出

手动执行

# 初始化配置

wproj data clean

# 启动解析服务(后台)

wparse daemon --stat 2 -p &

# 等待服务启动

sleep 3

# 生成并发送样本

wpgen sample -n 1000 --stat 1 -p

# 拉取 Prometheus 指标

curl -s http://localhost:35666/metrics

# 停止服务

kill $(cat ./.run/wparse.pid)

# 校验输出

wproj data stat

wproj data validate --input-cnt 1000

可调参数

- LINE_CNT: number of lines to generate (default 1000)

- STAT_SEC: statistics interval in seconds (default 2)

Prometheus 指标

示例指标

# HELP wparse_input_total Total number of input records

# TYPE wparse_input_total counter

wparse_input_total 1000

# HELP wparse_output_total Total number of output records by sink

# TYPE wparse_output_total counter

wparse_output_total{sink="benchmark"} 950

wparse_output_total{sink="default"} 50

# HELP wparse_parse_duration_seconds Parse duration histogram

# TYPE wparse_parse_duration_seconds histogram

wparse_parse_duration_seconds_bucket{le="0.001"} 800

wparse_parse_duration_seconds_bucket{le="0.01"} 990

wparse_parse_duration_seconds_bucket{le="+Inf"} 1000
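As a rough illustration of how a percentile is derived from cumulative histogram buckets like the sample above, the following Python sketch parses the bucket lines and interpolates linearly inside the target bucket, roughly the way PromQL's histogram_quantile does. It is a simplified sketch, not Prometheus code; the names `parse_buckets` and `quantile` are hypothetical, and the lowest bucket is assumed to start at 0.

```python
import re

# Bucket lines copied from the sample metrics above.
SAMPLE = """\
wparse_parse_duration_seconds_bucket{le="0.001"} 800
wparse_parse_duration_seconds_bucket{le="0.01"} 990
wparse_parse_duration_seconds_bucket{le="+Inf"} 1000
"""

BUCKET_RE = re.compile(r'_bucket\{le="([^"]+)"\}\s+(\d+(?:\.\d+)?)$')


def parse_buckets(text):
    """Return cumulative (upper_bound, count) pairs sorted by upper bound."""
    buckets = []
    for line in text.splitlines():
        match = BUCKET_RE.search(line.strip())
        if match:
            buckets.append((float(match.group(1)), float(match.group(2))))
    return sorted(buckets)


def quantile(q, buckets):
    """Estimate a quantile by linear interpolation inside the target bucket."""
    rank = q * buckets[-1][1]
    lower_bound, lower_count = 0.0, 0.0  # assumption: first bucket starts at 0
    for upper_bound, count in buckets:
        if count >= rank:
            if upper_bound == float("inf"):
                return lower_bound  # cannot interpolate into the +Inf bucket
            in_bucket = count - lower_count
            if in_bucket == 0:
                return upper_bound
            return lower_bound + (upper_bound - lower_bound) * (rank - lower_count) / in_bucket
        lower_bound, lower_count = upper_bound, count
    return lower_bound


p99 = quantile(0.99, parse_buckets(SAMPLE))
p50 = quantile(0.50, parse_buckets(SAMPLE))
```

With the sample values, the 99th percentile lands on the 0.01 s bucket boundary, because 990 of the 1000 observations are at or below it.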

指标类型

- Counter:输入/输出计数、错误计数

- Gauge:队列深度、活跃连接数

- Histogram:解析延迟、处理时长

Grafana 集成

添加数据源

- Add a Prometheus data source in Grafana

- URL: http://localhost:35666

示例查询

# 输入速率

rate(wparse_input_total[1m])

# 输出分布

sum by (sink) (wparse_output_total)

# P99 延迟

histogram_quantile(0.99, wparse_parse_duration_seconds_bucket)

常见问题

Q1: Prometheus 端点无响应

- Confirm that Prometheus export is enabled in conf/wparse.toml

- Confirm that the connector of the monitor group is set to prometheus_sink

- Check whether the port is already in use:

lsof -i :35666

Q2: 指标为空

- Confirm that wparse has received data

- Check data/logs/wparse.log for error messages

- Confirm that the sinks routing configuration is correct

Q3: 指标延迟

- Prometheus 指标通常有几秒的采集延迟

- 确认 scrape_interval 配置合理

相关文档

Sink Filter

This example demonstrates sink-level filtering and data splitting based on business rules.

Purpose

Validate the ability to:

- Route data to different sink paths (all/safe/residue) based on filter rules

- Configure filter expressions for conditional routing

- Validate output ratios using defaults.expect and per-sink expect settings

- Ensure filter logic correctness and expected data distribution

Features Validated

| Feature | Description |

|---|---|

| Filter Rules | Conditional data routing via filter.conf |

| Multi-path Routing | Splitting data to all/safe/residue paths |

| Expectation Validation | Ratio validation with expect settings |

| Default Expectations | Group-level defaults in defaults.toml |

| Tolerance Settings | ratio, tol, min, max constraints |

Filter Configuration

# sink/defaults.toml

[defaults.expect]

basis = "total_input" # Denominator for ratio calculation

min_samples = 1

mode = "warn"

Quick Start

cd core/sink_filter

./run.sh

# Validate output ratios

wproj validate sink-file -v

sink_filter (中文)

本用例演示“按 sink 过滤/分流“的场景:依据业务规则将输入样本分发到不同的 sink 路径(all/safe/residue 等),并通过 defaults.expect 与单项 expect 对输出比例进行离线校验。适用于验证过滤逻辑正确性、残留/错误路径占比是否符合预期。

目录结构

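- conf/: wparse.toml (main configuration), wpsrc.toml (v2 source configuration)

- connectors/source.d/: file source connectors (add more as needed)

- sink/ (used as sink_root)

  - infra.d/: infrastructure groups (default/miss/residue/intercept/error/monitor)

  - business.d/: filtering business-group routes (e.g. filter/*.toml)

  - defaults.toml: default expectations [defaults.expect]

- wpl/, oml/: rules and object models

- data/: runtime output (data/out_dat/, data/logs/, etc.; created after initialization)

- case_verify.sh: end-to-end verification script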

默认期望(defaults.expect)

在 sink/defaults.toml 中设置默认组级期望(示例):

[defaults.expect]

basis = "total_input" # 以总输入作为分母校验比例

min_samples = 1

mode = "warn"

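- The fixed groups (default/miss/residue/intercept/error) and the monitor group inherit this default when they do not set [group.expect] explicitly.

- A group that needs its own expectation can declare [group.expect] to override the default.

- Per-sink constraints are configured under [group.sinks.expect]:

  - Target interval: ratio + tol (meaning ratio ± tol)

  - Bounds: min / max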
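The constraints above can be read as a simple check of a sink's share against the chosen denominator. The Python sketch below shows one plausible reading; it is not the wproj implementation. The function name, the parameter names `min_ratio`/`max_ratio` (standing in for min/max) and the exact pass/fail semantics are assumptions, while the WARN-on-too-few-samples behaviour follows the min_samples setting described in this document.

```python
def check_expect(lines, denom, ratio=None, tol=0.0,
                 min_ratio=None, max_ratio=None, min_samples=1):
    """Return "WARN", "OK" or "FAIL" for one sink under the assumed semantics."""
    if denom == 0 or denom < min_samples:
        return "WARN"  # too few samples: skip the check but flag it
    actual = lines / denom
    if ratio is not None and abs(actual - ratio) > tol:
        return "FAIL"  # outside the ratio ± tol interval
    if min_ratio is not None and actual < min_ratio:
        return "FAIL"
    if max_ratio is not None and actual > max_ratio:
        return "FAIL"
    return "OK"
```

For instance, a sink that received 700 of 1000 input lines passes `ratio=0.7, tol=0.01`, while a residue path holding 120 of 1000 lines fails `max_ratio=0.1`.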

过滤型业务组(示例)

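- Business group definition: sink/filter/sink.toml

- Filter rules: sink/filter/filter.conf (match conditions)

- Common practice:

  - Main-path sink (e.g. all.dat): set no ratio; only cap the other paths with max, or use sum_tol to control the sum of several ratios

  - Safe-path sink (e.g. safe.dat): set a high min so that most samples land on the safe path

  - Error/residue paths: set max to keep their share from growing too large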

快速使用

在仓库根目录构建:

cargo build --workspace --all-features

在用例目录统计/校验:

cd usecase/core/sink_filter

# 统计源与 sink(文本/JSON)

../../../target/debug/wproj stat file

../../../target/debug/wproj stat file --json

# 离线校验(文本/JSON)

../../../target/debug/wproj validate sink-file

../../../target/debug/wproj validate sink-file --json

校验提示策略(WARN)

- When a group's denominator is 0 (no samples) or below min_samples, validation skips that group but prints a WARN; only ERROR/PANIC results in FAIL.

端到端脚本(可选)

如需完整跑一遍生成/过滤/校验流程,可执行:

./case_verify.sh

脚本会执行构建、生成样本、启动服务与校验。若你只需要离线校验与统计,可以直接使用 wproj stat/validate。

建议:新增业务组时,统一在 sink/defaults.toml 维护 [defaults.expect],各组仅在确需与默认不同的场景下覆盖组级 expect;对单个 sink 的比例约束,请放在 [group.sinks.expect] 下设置。

Sink Recovery

This example demonstrates sink failure handling and data recovery workflows.

Purpose

Validate the ability to:

- Handle sink write failures gracefully

- Store failed data in rescue files (rescue/ directory)

- Recover and replay rescue files to original target sinks

- Manage .lock and .dat file lifecycle

Features Validated

| Feature | Description |

|---|---|

| Rescue File Generation | Creating <sink>-YYYY-MM-DD_HH:MM:SS.dat.lock on failure |

| File Lock Management | .lock suffix during write, removed on completion |

| Recovery Daemon | wprescue daemon for replaying rescue files |

| Checkpoint Tracking | rescue/recover.lock for resumption |

| Ordered Replay | Time-sorted file processing |

Recovery Workflow

- Interruption Phase: Failed writes create rescue files

- Recovery Phase: wprescue daemon replays rescue files to sinks

- Cleanup: Successfully processed files are deleted

Quick Start

cd core/sink_recovery

# Phase 1: Generate rescue files (interrupt simulation)

./case_interrupt.sh

# Phase 2: Recover and replay

./case_recovery.sh

# Validate

wproj data stat

wproj validate sink-file -v

中断与恢复 (中文)

本用例演示 sink 中断与恢复流程:当业务 sink 写入失败时,数据会落入 rescue/ 目录;随后通过恢复流程(wprescue daemon)将救急文件回放到原目标 sink。

核心要点:

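- test_rescue serves as the backend of the business sink and toggles its availability periodically (roughly every 2 seconds).

- Rescue files produced during the interruption phase are named like <sink_name>-YYYY-MM-DD_HH:MM:SS.dat.lock; a file can take part in recovery only after the handle is released and the .lock suffix is removed.

- The recovery phase scans rescue/*.dat (excluding .lock), picks the newest file in time order, replays it, and deletes it on success.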
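The file-selection rule described above can be sketched in a few lines of Python. This is only an illustration of the naming convention, not the code in the recovery service: the function name `pick_rescue_file` and the regular expression are assumptions derived from the <sink_name>-YYYY-MM-DD_HH:MM:SS.dat pattern.

```python
import re
from datetime import datetime

# <sink_name>-YYYY-MM-DD_HH:MM:SS.dat (names still ending in .lock do not match)
RESCUE_RE = re.compile(
    r"^(?P<sink>.+)-(?P<ts>\d{4}-\d{2}-\d{2}_\d{2}:\d{2}:\d{2})\.dat$"
)


def pick_rescue_file(names):
    """Return (file_name, sink_name) for the newest finished rescue file, or None."""
    candidates = []
    for name in names:
        match = RESCUE_RE.match(name)
        if match:
            stamp = datetime.strptime(match.group("ts"), "%Y-%m-%d_%H:%M:%S")
            candidates.append((stamp, name, match.group("sink")))
    if not candidates:
        return None
    _, name, sink = max(candidates)  # newest timestamp wins
    return name, sink
```

Given a directory listing that mixes .dat and .dat.lock files, only the finished .dat files are considered, and the sink name is taken from the prefix before the timestamp.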

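Directory structure (key items)

- usecase/core/sink_recovery/conf/wparse.toml: basic settings such as working directory, rate, logging and command channel (rescue_root = "./data/rescue").

- usecase/core/sink_recovery/conf/wpsrc.toml: file source (v2) that reads ./data/in_dat/gen.dat (default).

- usecase/core/sink_recovery/sink/infra.d/: infrastructure groups (default/miss/residue/intercept/error/monitor).

- usecase/core/sink_recovery/sink/business.d/benchmark.toml: business group benchmark, targeting test_rescue, used to trigger interruptions and rescue files.

- usecase/core/sink_recovery/case_interrupt.sh: end-to-end script for the interruption phase (generate data -> start parsing -> observe rescue).

- usecase/core/sink_recovery/case_recovery.sh: end-to-end script for the recovery phase (start wprescue work -> replay rescue -> validate).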

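Quick start

- Interruption phase (generate rescue files)

  - Run from the case directory: usecase/core/sink_recovery/case_interrupt.sh

  - The script:

    - pre-builds and initializes the configuration;

    - generates 10000 sample lines into ./data/in_dat/gen.dat with wpgen sample;

    - starts wparse daemon; the benchmark group uses the test_rescue backend, whose periodic interruptions trigger rescue writes;

    - prints the .dat files under rescue/ and a wproj stat file summary when it finishes.

  - Expected: at least one benchmark_file_sink-*.dat file appears under rescue/.

- Recovery phase (replay rescue files)

  - Run in the same directory: usecase/core/sink_recovery/case_recovery.sh

  - The script:

    - starts wprescue daemon --stat 100 to enter recovery mode;

    - waits briefly, then sends a USR1 signal for a graceful shutdown;

    - lists rescue/ and prints the output of wproj stat file and of the consistency check wproj validate sink-file -v.

  - Expected:

    - the .dat files in rescue/ are consumed and deleted;

    - the line counts of the corresponding files under sinks v2 increase (e.g. data/out_dat/benchmark.dat, data/out_dat/default.dat).

Reference log (logs/wprescue.log):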

recover begin

recover file: ./data/rescue/benchmark_file_sink-2025-10-04_01:59:24.dat

recover begin! file : ./data/rescue/benchmark_file_sink-2025-10-04_01:59:24.dat

recover end! clean file : ./data/rescue/benchmark_file_sink-2025-10-04_01:59:24.dat

recover end

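How it works

- Interrupted writes and rescue files

  - The business sink backend is test_rescue (see usecase/core/sink_recovery/sink/benchmark/sink.toml); a proxy toggles its health state on a timer;

  - When a write fails, SinkRuntime switches to the backup output (rescue), creates rescue/<sink>-YYYY-MM-DD_HH:MM:SS.dat.lock, and renames it to .dat once the handle is released;

  - Related implementation:

    - Backup switching: src/sinks/runtime/manager.rs:120 and use_back_sink/swap_back;

    - File lock/unlock: src/sinks/backends/file.rs (the .lock suffix is removed on Drop/stop).

- Recovery replay

  - ActCovPicker scans rescue/*.dat periodically and takes the newest file, ordered by the time in the name;

  - The sink name is parsed from the file-name prefix (get_sink_name), and SinkRouteAgent.get_sink_agent locates the corresponding sink channel;

  - Each line is sent to that sink as Raw data; database backends (Mysql/ClickHouse/Elasticsearch) go through to_tdc (currently a TODO example);

  - After the file has been read successfully, the .dat is deleted and the checkpoint is updated (the resume point is recorded in rescue/recover.lock).

  - Related implementation: src/services/collector/recovery/mod.rs.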

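Troubleshooting

- Statistics are 0 or no data is written:

  - Confirm that rescue/ contains .dat files (not .lock);

  - Confirm that the working directory of wprescue daemon matches the case (rescue_root in conf/wparse.toml is ./data/rescue);

  - Confirm that the business sink name matches the rescue file prefix (benchmark_file_sink-*.dat corresponds to [[sink_group.sinks]].name = "benchmark_file_sink");

  - For details of the recovery flow, search logs/wprescue.log for the recover keyword.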

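Tunable parameters

- conf/wparse.toml:

  - speed_limit caps the recovery read rate (lines per second).

  - rescue_root sets the rescue directory.

- Script environment variables: LINE_CNT and STAT_SEC can be overridden by exporting them (see the defaults in the scripts).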

Related files and commands

- Scripts:

  - usecase/core/sink_recovery/case_interrupt.sh

  - usecase/core/sink_recovery/case_recovery.sh

- Core log: usecase/core/sink_recovery/logs/wprescue.log

- Validation tools:

  - wproj stat file: counts the output lines of the sinks

  - wproj validate sink-file -v: checks the expectation settings and ratios

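Suggestions for test completeness and robustness

To keep the recovery path stable across environments and edge cases, consider adding the following cases and checkpoints:

- Scenario coverage

  - Ordered replay of multiple rescue files: create several <sink>-YYYY-MM-DD_HH:MM:SS.dat files under rescue/ and confirm that, by time order, only the newest one is replayed and that the file is deleted afterwards.

  - .lock and .dat side by side: confirm that .lock is ignored and only .dat takes part in recovery; a .lock left behind after the writer is killed must not affect recovery.

  - Empty files / empty lines: recovery currently sends line by line, so rescue files should contain no empty lines (consistent with the code comments), and the tooling should explicitly skip or report empty lines.

  - Name mismatch: when the file prefix does not match a sink name (as parsed by get_sink_name), an error should be logged and the file skipped; a negative test case for this is recommended.

- Resumption and idempotence

  - Stop wprescue work midway (e.g. kill -USR1 or SIGINT); after a restart it should resume from the point recorded in rescue/recover.lock without replaying files already processed.

  - Run case_recovery.sh several times in a row; the line count of the target sink must not keep growing (no duplicate consumption).

- Performance and stress

  - Tune speed_limit in conf/wparse.toml: test low (e.g. 10), high (e.g. 1e6) and default values, and observe throughput, CPU and I/O.

  - Large-file recovery: prepare rescue files with 10^5 to 10^6 lines and verify memory usage, metric emission and timely deletion of the file.

- Fault injection and recovery

  - test_rescue phase offset: lengthen or shorten the toggle period and check that backup switching and ActMaintainer reconnection behave as expected (warn_sink! reconnect log).

  - Target sink briefly unavailable: cut off writes by hand during recovery (e.g. read-only file permissions or a non-writable directory) and confirm that failure accounting, retries and the final fallback follow the robustness policy (Throw/Tolerant/FixRetry, etc.).

- Expectation validation (expect)

  - Set min_samples/sum_tol/others_max and similar parameters in [defaults.expect] of sink/defaults.toml, then check whether wproj validate sink-file -v reports clear evidence (denom/ratio/lines).

  - Configure [[sinks]].expect (e.g. ratio/tol) for a single sink in the business sink.toml and check that the actual share stays within tolerance.

- Observability and logs

  - Temporarily raise log_conf.level to debug and grep for recover begin|recover file|recover end|reconnect success to build a troubleshooting baseline.

  - Check that the monitor metrics change as recovery progresses (SinkStat/pick_stat).

- Compatibility and paths

  - Confirm that rescue_root, sink_root, out/ and similar directories show no permission or path-separator differences across platforms (Linux/macOS).

  - Keep the business sink name strictly identical to the rescue file prefix (e.g. benchmark_file_sink) to avoid routing failures.

- Follow-up improvements (suggested)

  - to_tdc (TDC conversion for database backends) is currently a TODO; once it is implemented, add unit/integration tests for the SQL/batch-write logic.

  - Expose the test_rescue phase duration as an environment variable so that CI can build deterministic timing.

  - Run case_interrupt.sh → case_recovery.sh serially in CI and collect wprescue.log and the wproj validate results as artifacts.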

TCP Roundtrip

This example demonstrates TCP input/output end-to-end data flow.

Purpose

Validate the ability to:

- Push data via TCP sink from generator

- Receive data via TCP source in parser

- Process and output to file sinks

- Verify data integrity through the TCP pipeline

Features Validated

| Feature | Description |

|---|---|

| TCP Sink | Pushing data to TCP endpoint |

| TCP Source | Receiving data from TCP port |

| End-to-End Flow | Complete data path validation |

| Data Integrity | Input/output count verification |

Quick Start

cd core/tcp_roundtrip

./run.sh

Steps

- Start the parser

wparse daemon --stat 5

- Generate data (push to TCP)

wpgen sample -n 10000 --stat 5

- Stop and validate

wproj stat sink-file

wproj validate sink-file -v --input-cnt 10000

TCP Roundtrip (中文)

目标:演示通用 TCP 输入/输出的端到端链路。

- wpgen: pushes sample data to a local port via connect = "tcp_sink"

- wparse: enables the tcp_src source to listen on the same port and writes to a file sink

步骤

- 启动解析器

wparse daemon --stat 5

- 生成数据(推送到 TCP)

wpgen sample -n 10000 --stat 5

- 停止解析器并校验

wproj stat sink-file

wproj validate sink-file -v --input-cnt 10000
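The idea behind the roundtrip check, sending N records over TCP and confirming that the receiver sees the same N records, can be reproduced with a small Python sketch that uses only the standard library. It does not involve wpgen or wparse; the function name `run_roundtrip`, the loopback address and the newline framing are assumptions made for illustration.

```python
import socket
import threading


def run_roundtrip(lines):
    """Send lines over a loopback TCP connection and return what the receiver read."""
    server = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    server.bind(("127.0.0.1", 0))  # port 0: let the OS pick a free port
    server.listen(1)
    port = server.getsockname()[1]
    received = []

    def receiver():
        conn, _ = server.accept()
        with conn, conn.makefile("r", encoding="utf-8") as reader:
            for line in reader:  # ends when the sender closes the connection
                received.append(line.rstrip("\n"))

    worker = threading.Thread(target=receiver)
    worker.start()
    with socket.create_connection(("127.0.0.1", port)) as client:
        client.sendall("".join(item + "\n" for item in lines).encode("utf-8"))
    worker.join()
    server.close()
    return received


sent = [f"record-{i}" for i in range(1000)]
got = run_roundtrip(sent)
```

Comparing `len(got)` with `len(sent)` plays the same role as passing --input-cnt to the validation step: the input count is the reference against which the output is checked.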

关键文件

- conf/wparse.toml:主配置(sources/sinks/model 路径)

- models/sources/wpsrc.toml: source list (includes tcp_src)

- conf/wpgen.toml: generator configuration (outputs through tcp_sink to a local port)

WPL Missing

This example demonstrates WPL field missing tolerance and fault handling.

Purpose

Validate the ability to:

- Handle missing required fields in input data

- Process optional fields with \| syntax

- Route incomplete data to the miss infrastructure group

- Validate WPL rule fault tolerance

Features Validated

| Feature | Description |

|---|---|

| Optional Field Syntax | Using | to mark optional fields |

| Miss Group Routing | Routing records with missing required fields |

| Fault Tolerance | Continuing parsing when optional fields are absent |

| Data Completeness | Validating expected miss/success ratios |

Field Handling

| Field Type | Behavior When Missing |

|---|---|

| Required (no \|) | Record routes to miss group |

| Optional (with \|) | Parsing continues, field is empty |

| Parse Error | Record routes to residue or error group |

WPL Syntax Example

package /benchmark {

rule benchmark_1 {

(digit:id, digit:len, time, sn, chars:dev_name\|, ...)

# dev_name is optional (marked with \|)

}

}

Quick Start

cd core/wpl_missing

./run.sh

wpl_missing (中文)

本用例演示“WPL 字段缺失容错“的场景:当输入数据中某些字段不存在或解析失败时,系统如何处理缺失字段并将数据路由到相应的基础组(miss)。适用于验证 WPL 规则的容错性与数据完整性校验。

目录结构

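- conf/: configuration directory

  - conf/wparse.toml: main configuration

- models/: rules and routing

  - models/wpl/benchmark/: WPL parsing rules

    - parse.wpl: parsing rules

    - gen_rule.wpl: generation rules (samples with missing fields)

  - models/sinks/business.d/: business routing

  - models/sinks/infra.d/: infrastructure groups (including the miss group)

  - models/sources/wpsrc.toml: source configuration

- data/: runtime data directory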

WPL 容错机制

可选字段语法

在 WPL 规则中,使用 \| 标记可选字段:

package /benchmark {

rule benchmark_1 {

(digit:id, digit:len, time, sn, chars:dev_name\|, ...)

}

}

缺失字段处理

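- Required field missing: the whole record is routed to the miss infrastructure group

- Optional field missing: parsing continues and the missing field is empty

- Parse failure: the record is routed to the residue or error infrastructure group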

解析规则示例

parse.wpl

package /benchmark {

rule benchmark_1 {

(digit:id, digit:len, time, sn, chars:dev_name, time, kv, sn,

chars:dev_name, time, time, ip, kv, chars, kv, kv, chars, kv, kv,

chars, chars, ip, chars, http/request<[,]>, http/agent")\,

}

rule benchmark_2 {

(ip:src_ip, digit:port, chars:dev_name, ip:dst_ip, digit:port,

time", kv, kv, sn, kv, ip, kv, chars, kv, sn, kv, kv, time, chars,

time, sn, kv, chars, chars, ip, chars, http/request", http/agent")\,

}

}

快速使用

构建项目

cargo build --workspace --all-features

运行用例

cd core/wpl_missing

./run.sh

脚本执行流程:

- 初始化环境与配置

- 使用 wpgen 生成包含缺失字段的样本数据

- 运行 wparse 批处理解析

- 校验输出统计(验证 miss 组有数据)

手动执行

# 初始化配置

wproj check

wproj data clean

wpgen data clean

# 生成样本数据

wpgen sample -n 1000

# 运行批处理解析

wparse batch --stat 2 -p

# 校验输出

wproj data stat

wproj data validate

可调参数

- LINE_CNT: number of lines to generate (default 1000)

- STAT_SEC: statistics interval in seconds (default 2)

基础组说明

| 组名 | 用途 | 预期行为 |

|---|---|---|

| default | 默认输出 | 未路由到业务组的数据 |

| miss | 缺失字段 | 必需字段缺失的数据 |

| residue | 残留数据 | 部分解析成功的数据 |

| error | 错误数据 | 处理出错的数据 |

| monitor | 监控指标 | 系统指标输出 |

期望配置

在 models/sinks/defaults.toml 中设置期望:

[defaults.expect]

basis = "total_input"

min_samples = 1

mode = "warn"

对于 miss 组,可以设置合理的上限:

# models/sinks/infra.d/miss.toml

[[sink_group]]

name = "/sink/infra/miss"

[sink_group.expect]

max = 0.1 # 缺失数据不超过 10%

常见问题

Q1: miss 组数据过多

- 检查 WPL 规则是否与输入数据格式匹配

- 确认必需字段在输入数据中存在

- Consider making some fields optional (add the \| marker)

Q2: 如何区分 miss 和 residue

- miss:必需字段缺失导致规则无法匹配

- residue:规则部分匹配但有残留内容

Q3: 可选字段默认值

- 可选字段缺失时默认为空值

- In OML, take(option:[field]) can be used to handle them

相关文档

WPL Pipe

This example demonstrates WPL pipeline preprocessing for handling encoded or escaped data before parsing.

Purpose

Validate the ability to:

- Preprocess input data with pipeline operations before parsing

- Decode Base64 encoded content

- Handle quoted and escaped strings

- Chain multiple preprocessing operations

Features Validated

| Feature | Description |

|---|---|

| Pipeline Syntax | \|operation\| prefix for preprocessing |

| Base64 Decoding | decode/base64 operation |

| Unquote/Unescape | unquote/unescape for quoted strings |

| Trim | trim for whitespace removal |

| Operation Chaining | Left-to-right operation execution |

Pipeline Operations

| Operation | Input | Output |

|---|---|---|

| decode/base64 | eyJhIjogMX0= | {"a": 1} |

| unquote/unescape | "{ \"a\": 1 }" | { "a": 1 } |

| trim | data | data |

WPL Syntax Example

package /pipe_demo {

rule fmt_from_base64 {

// 1) Base64 decode

// 2) Unquote and unescape

// 3) Parse JSON

|decode/base64|unquote/unescape|(json(_@_origin))

}

}

Quick Start

cd core/wpl_pipe

./run.sh

wpl_pipe (中文)

本用例演示“WPL 管道预处理“的场景:在正式解析前,通过管道操作对输入数据进行预处理(如 Base64 解码、引号转义还原等),然后再进行 JSON 或其他格式的解析。适用于处理编码、转义等复杂格式的日志数据。

目录结构

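- conf/: configuration directory

  - conf/wparse.toml: main configuration

- models/: rules and routing

  - models/wpl/pipe_demo/: pipeline processing rules

    - parse.wpl: parsing rules (with pipeline preprocessing)

  - models/sinks/business.d/: business routing

  - models/sinks/infra.d/: infrastructure groups

  - models/sources/wpsrc.toml: source configuration

- data/: runtime data directory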

WPL 管道语法

管道操作符

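Use |operation| at the start of a rule to define the preprocessing pipeline:

rule example {

|op1|op2|...|( parsing rule )

}

Common pipeline operations

- decode/base64: Base64 decoding

- unquote/unescape: strips the outer quotes and resolves escape characters

- trim: strips leading and trailing whitespace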

解析规则示例

parse.wpl

package /pipe_demo {

#[copy_raw(name : "_origin")]

rule fmt_from_quote {

// 输入: "{ \"a\": 1, \"b\": \"foo\" }"

// 1) 去除外层引号并还原内部转义引号

// 2) 解析根级 JSON 为字段

|unquote/unescape|(json(_@_origin))

}

#[copy_raw(name : "_origin")]

rule fmt_from_base64 {

// 输入: base64("{ \"a\": 2, \"b\": \"bar\" }")

// 1) Base64 解码

// 2) 去除外层引号并还原转义

// 3) 解析 JSON 为字段

|decode/base64|unquote/unescape|(json(_@_origin))

}

}

处理流程

- Input: "eyJhIjogMX0=" (the Base64 encoding of {"a": 1})

- decode/base64: {"a": 1}

- unquote/unescape: {"a": 1} (surrounding quotes are removed if present)

- JSON parsing: extracts a=1
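The processing flow above can be mimicked in Python to see what each stage produces. This is a sketch of the described behaviour, not the WPL engine: the function names are hypothetical, and it assumes that unquote/unescape leaves input without surrounding quotes untouched and handles only the escapes \", \\, \n and \t.

```python
import base64
import json

ESCAPES = {'"': '"', "\\": "\\", "n": "\n", "t": "\t"}


def unquote_unescape(text):
    """Strip one pair of surrounding double quotes, then resolve backslash escapes."""
    if len(text) < 2 or not (text.startswith('"') and text.endswith('"')):
        return text  # nothing to unquote
    inner, out, i = text[1:-1], [], 0
    while i < len(inner):
        if inner[i] == "\\" and i + 1 < len(inner):
            nxt = inner[i + 1]
            out.append(ESCAPES.get(nxt, "\\" + nxt))  # unknown escapes are kept as-is
            i += 2
        else:
            out.append(inner[i])
            i += 1
    return "".join(out)


def run_pipeline(raw):
    """decode/base64 -> unquote/unescape -> JSON parse, applied left to right."""
    decoded = base64.b64decode(raw).decode("utf-8")
    return json.loads(unquote_unescape(decoded))


fields = run_pipeline("eyJhIjogMX0=")
```

Swapping the first two stages would fail on this input, which is why the decode step comes before the unquote step in the rule.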

快速使用

构建项目

cargo build --workspace --all-features

运行用例

cd core/wpl_pipe

./run.sh

脚本执行流程:

- 初始化环境与配置

- 生成 Base64/转义格式的样本数据

- 运行 wparse 批处理解析

- 校验输出统计

手动执行

# 初始化配置

wproj check

wproj data clean

wpgen data clean

# 生成样本数据(Base64+引号转义 JSON)

wpgen sample -n 1000 --stat 2

# 运行批处理解析

wparse batch --stat 2 -S 1 -p -n 1000

# 校验输出

wproj data stat

wproj data validate

可调参数

- LINE_CNT: number of lines to generate (default 1000)

- STAT_SEC: statistics interval in seconds (default 2)

管道操作详解

decode/base64

将 Base64 编码的字符串解码为原始内容:

输入: eyJhIjogMX0=

输出: {"a": 1}

unquote/unescape

处理带引号和转义的字符串:

输入: "{ \"a\": 1, \"b\": \"hello\" }"

输出: { "a": 1, "b": "hello" }

Escape sequences handled:

- \" → "

- \\ → \

- \n → newline

- \t → tab

组合使用

多个管道操作从左到右依次执行:

|decode/base64|unquote/unescape|(...)

注解说明

copy_raw

The #[copy_raw(name : "_origin")] annotation keeps the raw input:

- Copies the raw input into the _origin field

- Useful for later auditing or debugging

常见问题

Q1: Base64 解码失败

- 确认输入是有效的 Base64 编码

- 检查是否有 URL 安全 Base64(需要不同的解码器)

- Check data/logs/wparse.log for error messages

Q2: 转义还原不完整

- Confirm that the escape format is one that unquote/unescape supports

- Some special escapes may need custom handling

Q3: 管道顺序错误

- 管道从左到右执行

- 先解码(decode)再去引号(unquote)

相关文档

WPL Success

This example validates successful full-chain WPL parsing with multiple security alert log formats.

Purpose

Validate the ability to:

- Parse multiple security alert types successfully

- Apply data_type tags via rule annotations

- Route parsed data to appropriate business sinks

- Achieve high parsing success rates

Features Validated

| Feature | Description |

|---|---|

| Multi-Rule Parsing | Parsing 6+ security alert types |

| Rule Annotations | #[tag(data_type: "...")] for tagging |

| Data Type Routing | Routing based on data_type tags |

| Success Rate Validation | Verifying high parsing success rates |

Supported Alert Types

| Alert Type | data_type Tag | Description |

|---|---|---|

| webids_alert | webids_alert | Web intrusion detection alerts |

| webshell_alert | webshell_alert | Webshell detection alerts |

| ips_alert | ips_alert | Intrusion prevention system alerts |

| ioc_alert | ioc_alert | Threat intelligence alerts |

| system | system | System logs |

| audit | audit | Audit logs |

WPL Syntax Example

package /qty {

#[tag(data_type: "webids_alert")]

rule webids_alert {

(symbol(webids_alert), chars:serialno, chars:rule_id, ...)

}

#[tag(data_type: "audit")]

rule audit {

(kv(chars@username), kv(chars@serialno), ...)

}

}

Quick Start

cd core/wpl_success

./run.sh

wpl_success (中文)

本用例演示“WPL 成功解析全链路“的场景:验证 WPL 规则能够成功解析多种安全告警日志格式(webids_alert、webshell_alert、ips_alert、ioc_alert、system、audit 等),并正确路由到业务 sink。适用于验证解析规则的正确性与完整性。

目录结构

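- conf/: configuration directory

  - conf/wparse.toml: main configuration

- models/: rules and routing

  - models/wpl/qty_alert/: security alert parsing rules

    - parse.wpl: parsing rules for several alert types

  - models/oml/: OML transformation models

  - models/sinks/business.d/: business routing

  - models/sinks/infra.d/: infrastructure groups

  - models/sources/wpsrc.toml: source configuration

- data/: runtime data directory

- out/: output directory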

支持的告警类型

解析规则 (models/wpl/qty_alert/parse.wpl)

本用例支持以下安全告警类型:

| 告警类型 | data_type 标签 | 说明 |

|---|---|---|

| webids_alert | webids_alert | Web 入侵检测告警 |

| webshell_alert | webshell_alert | Webshell 检测告警 |

| ips_alert | ips_alert | 入侵防护系统告警 |

| ioc_alert | ioc_alert | 威胁情报告警 |

| system | system | 系统日志 |

| audit | audit | 审计日志 |

规则示例

package /qty {

#[tag(data_type: "webids_alert")]

rule webids_alert {

(symbol(webids_alert), chars:serialno, chars:rule_id, chars:rule_name,

time_timestamp:write_date, chars:vuln_type, ip:sip, digit:sport,

ip:dip, digit:dport, digit:severity, chars:host, chars:parameter,

chars:uri, chars:filename, chars:referer, chars:method, chars:vuln_desc,

time:public_date, chars:vuln_harm, chars:solution, chars:confidence,

chars:victim_type, chars:attack_flag, chars:attacker, chars:victim,

digit:attack_result, chars:kill_chain, chars:code_language,

time:loop_public_date, chars:rule_version, chars:xff, chars:vlan_id,

chars:vxlan_id)\|\!

}

#[tag(data_type: "audit")]

rule audit {

(kv(chars@username), kv(chars@serialno), kv(chars@submod),

kv(chars@detail), kv(time@updatetime), kv(ip@ip), kv(chars@sub2),

kv(chars@log_type), kv(chars@module), kv(digit@sub_type))\|\!

}

// ... 更多规则

}

规则注解

#[tag(data_type: "...")]:为解析结果添加数据类型标签,用于后续路由分发

快速使用

构建项目

cargo build --workspace --all-features

运行用例

cd core/wpl_success

./run.sh

脚本执行流程:

- 初始化环境与配置

- 使用 wpgen 生成多种告警类型的样本数据

- 验证输入文件存在

- 运行 wparse 批处理解析

- 校验输出统计与期望

手动执行

# 初始化配置

wproj check

wproj data clean

wpgen data clean

# 生成样本数据

wpgen sample -n 3000 --stat 3

# 验证输入文件

test -s "./data/in_dat/gen.dat" || echo "missing input file"

# 运行批处理解析

wparse batch --stat 3 -p -n 3000

# 校验输出

wproj data stat

wproj data validate

可调参数

- LINE_CNT: number of lines to generate (default 3000)

- STAT_SEC: statistics interval in seconds (default 3)

期望配置

成功解析期望

本用例的目标是验证解析成功率,期望配置示例:

[defaults.expect]

basis = "total_input"

min_samples = 100

mode = "warn"

# 业务组期望高成功率

[sink_group.expect]

ratio = 0.95 # 期望 95% 的数据成功解析

tol = 0.05 # 容差 ±5%

基础组期望

# miss group: expect a low share

[sink_group.expect]

max = 0.05 # missing data at most 5%

# error group: expect a share close to zero

[sink_group.expect]

max = 0.01 # error data at most 1%

字段提取说明

必需字段(无 \| 标记)

缺失时整条记录进入 miss 组

可选字段(带 \|! 标记)

缺失时记录继续解析,字段为空

KV 格式解析

kv(chars@username) # 解析 key=value 格式,提取 username

kv(time@updatetime) # 解析 key=value 格式,提取时间类型的 updatetime

常见问题

Q1: 解析成功率低

- 检查样本数据格式是否与 WPL 规则匹配

- 确认字段分隔符与规则定义一致

- Check data/logs/wparse.log for parse errors

Q2: 某种告警类型未匹配

- Confirm that the alert type prefix is correct (e.g. symbol(webids_alert))

- Check that the number and order of fields match

Q3: 数据标签未生效

- Confirm that the rule annotation is well formed: #[tag(data_type: "...")]

- Confirm that the OML/sink routing configuration uses the corresponding tag

相关文档

Extension Connectors

This directory contains extension connector examples demonstrating WarpParse integration with various external systems (databases, message queues, log storage, monitoring).

Case List

| Case | Purpose | Validated Features |

|---|---|---|

| doris | File Source → Doris Stream Load pipeline | Doris sink, Stream Load, batch processing |

| kafka | Kafka Source/Sink integration | Kafka consumer/producer, topic routing |

| practice | Real-world multi-source monitoring scenario | Multi-source collection, Fluent-bit, Kafka, VictoriaLogs, Grafana |

| tcp_mysql | TCP Source → MySQL Sink pipeline | TCP source, MySQL sink, data persistence |

| tcp_victorialogs | TCP Source → VictoriaLogs Sink pipeline | TCP source, VictoriaLogs sink, log storage |

| victoriametrics | Internal metrics push to VictoriaMetrics | VictoriaMetrics sink, metrics export, monitoring |

Common Structure

case_name/

├── conf/

│ ├── wparse.toml # Engine configuration

│ └── wpgen.toml # Data generator configuration

├── topology/

│ ├── sources/ # Source definitions

│ └── sinks/ # Sink definitions

├── models/

│ ├── wpl/ # WPL parsing rules

│ └── oml/ # OML transformation models

├── data/ # Runtime data

├── docker-compose.yml # Container orchestration

└── run.sh # Execution script

Quick Start

# Enter case directory

cd extensions/<case_name>

# Start dependent services

docker compose up -d

# Run the case

./run.sh

扩展连接器 (中文)

本目录包含扩展连接器示例,演示 WarpParse 与各种外部系统(数据库、消息队列、日志存储、监控)的集成。

用例清单

| 用例 | 目的 | 验证特性 |

|---|---|---|

| doris | 文件源 → Doris Stream Load 管道 | Doris sink、Stream Load、批处理 |

| kafka | Kafka 源/汇集成 | Kafka 消费者/生产者、topic 路由 |

| practice | 实战多源监控场景 | 多源采集、Fluent-bit、Kafka、VictoriaLogs、Grafana |

| tcp_mysql | TCP 源 → MySQL Sink 管道 | TCP 源、MySQL sink、数据持久化 |

| tcp_victorialogs | TCP 源 → VictoriaLogs Sink 管道 | TCP 源、VictoriaLogs sink、日志存储 |

| victoriametrics | 内部指标推送到 VictoriaMetrics | VictoriaMetrics sink、指标导出、监控 |

通用结构

case_name/

├── conf/

│ ├── wparse.toml # 引擎配置

│ └── wpgen.toml # 数据生成器配置

├── topology/

│ ├── sources/ # 源定义

│ └── sinks/ # 汇定义

├── models/

│ ├── wpl/ # WPL 解析规则

│ └── oml/ # OML 转换模型

├── data/ # 运行时数据

├── docker-compose.yml # 容器编排

└── run.sh # 执行脚本

快速开始

# 进入用例目录

cd extensions/<case_name>

# 启动依赖服务

docker compose up -d

# 运行用例

./run.sh

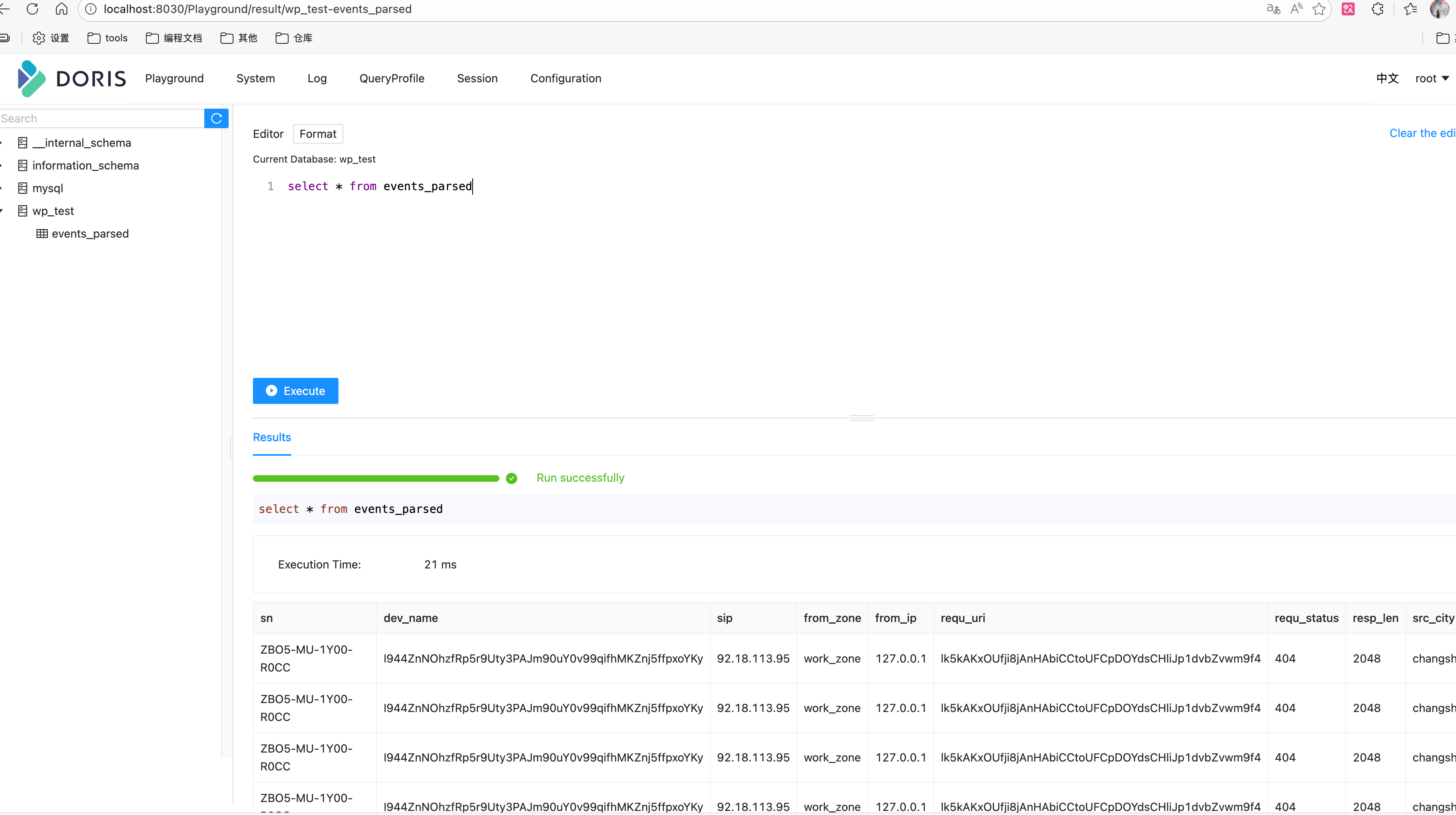

Using doris

Prerequisites

- Start docker compose: docker compose up -d

- Once doris is up, create the database and tables defined in test.sql

- Inspect the data:

  - Open http://localhost:8030/Playground/result/wp_test-events_parsed

  - Run the query: select * from events_parsed

Configuration

[connectors.params]

# Stream Load API settings (new version)

endpoint = "http://localhost:8040" # use the BE HTTP port (recommended)

user = "root" # user name

password = "" # password

database = "test_db" # database

table = "events_parsed" # table name

timeout_secs = 30 # timeout

max_retries = 3 # number of retries

batch_size = 100_0000 # general parameter: batch size

# Optional: custom Stream Load parameters

[connectors.params.headers]

strip_outer_array = "false"

max_filter_ratio = "0.1"

strict_mode = "false"

Kafka

This directory provides an end-to-end Kafka validation case to verify the unified Kafka Source/Sink connectors work as expected.

- Producer: wpgen writes sample data to Kafka (input topic, default wp.testcase.events.raw)

- Engine: wparse reads from Kafka input, parses and routes to multiple sinks (including Kafka output topic, default wp.testcase.events.parsed, and a file sink)

- Optional verification: Use wpkit kafka consume to verify messages on the Kafka output topic

Data Flow

The diagram below shows the data flow and key components (wp.testcase.events.raw/wp.testcase.events.parsed).

flowchart LR

subgraph Producer

WPGEN[wpgen sample]\n(按 wpgen.toml 写 Kafka)

end

subgraph Kafka

KAFKA_IN[(KAFKA_INPUT_TOPIC)]

KAFKA_OUT[(KAFKA_OUTPUT_TOPIC)]

end

subgraph Engine

WPARSE[wparse batch\n(-n 限制条数自动退出)]

SINKS{{Sink Group\n(models/sink)}}

OML[OML 映射/脱敏]

end

subgraph Verifier

FILE[file sink: events.parsed.prototext]

CONSUME[wpkit kafka consume\n(可选验证)]

end

WPGEN -- produce --> KAFKA_IN

WPARSE -- consume --> KAFKA_IN

WPARSE -- route --> OML --> SINKS

SINKS -- write --> FILE

SINKS -- produce --> KAFKA_OUT

CONSUME -- verify --> KAFKA_OUT

If Mermaid is not supported, refer to the ASCII version:

wpgen(sample) --> Kafka(KAFKA_INPUT_TOPIC) --> wparse(batch) --> [OML/route] --> sinks{file,kafka}

sinks --> file: data/out_dat/events.parsed.prototext

sinks --> Kafka(KAFKA_OUTPUT_TOPIC) --> (optional) wpkit kafka consume verification

Directory Structure

- conf/

  - wparse.toml: Engine main config (directories/concurrency/logging, etc.)

  - wpgen.toml: Data generator config (points to Kafka sink, overrides input topic)

- topology/source/wpsrc.toml: Source routing (contains two [[sources]]: kafka_input subscribes to input topic; kafka_output_tap subscribes to output topic for self-testing/demo, can be disabled as needed)

- topology/sink/business.d/example.toml: Business sink routing (contains a file sink and a Kafka sink)

- models/oml/...: OML models (result field mapping/masking)

- case_verify.sh: One-click verification script (starts wparse → wpgen sends → verification)

Note: Source and Sink connector IDs reference definitions in the repository root connectors/ directory:

- connectors/source.d/30-kafka.toml: id=kafka_src (allows overriding topic/group_id/config)

- connectors/sink.d/30-kafka.toml: id=kafka_sink (allows overriding topic/config/num_partitions/replication/brokers/fmt)

Prerequisites

- Kafka running locally, default address

localhost:9092(or override via environment variables, see below)

Quick Start

Enter the case directory and run the script (default debug):

cd extensions/wp-connectors/testcase

./case_verify.sh # or ./case_verify.sh release

Main script steps:

- Clean run directory (preserving conf/ templates), build binaries to target/<profile>, add to PATH

- wpkit conf check for config self-check; clean data directory

- Start wparse in background (-n limits processing count, auto-exits on completion)

- Run wpgen sample to generate sample data and write to Kafka input topic

- Wait for wparse to exit and perform file sink verification (optional)

Parameters

The script supports the following optional environment variables:

- PROFILE: Build and run profile (debug|release), default debug

- LINE_CNT: Number of sample records to generate/process, default 3000

- STAT_SEC: Statistics print interval (seconds), default 3

- KAFKA_BOOTSTRAP_SERVERS: Kafka address, default localhost:9092

- KAFKA_INPUT_TOPIC: Input topic (wpgen writes, wparse consumes), default wp.testcase.events.raw

- KAFKA_OUTPUT_TOPIC: Output topic (written by the wparse Kafka sink), default wp.testcase.events.parsed

Example:

KAFKA_BOOTSTRAP_SERVERS=127.0.0.1:9092 KAFKA_INPUT_TOPIC=my_in KAFKA_OUTPUT_TOPIC=my_out ./case_verify.sh
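One common way for a script to honor optional variables like these is shell parameter expansion with `${VAR:-default}`. The sketch below assumes that approach; the real script may resolve its defaults differently, and the function names resolve_case_env and print_case_env are hypothetical. The default values are the ones listed above.

```shell
#!/usr/bin/env bash
# Sketch: resolve the optional variables to their documented defaults.
# ${VAR:-default} keeps a non-empty caller value and falls back otherwise.
resolve_case_env() {
  PROFILE="${PROFILE:-debug}"
  LINE_CNT="${LINE_CNT:-3000}"
  STAT_SEC="${STAT_SEC:-3}"
  KAFKA_BOOTSTRAP_SERVERS="${KAFKA_BOOTSTRAP_SERVERS:-localhost:9092}"
  KAFKA_INPUT_TOPIC="${KAFKA_INPUT_TOPIC:-wp.testcase.events.raw}"
  KAFKA_OUTPUT_TOPIC="${KAFKA_OUTPUT_TOPIC:-wp.testcase.events.parsed}"
}

# Hypothetical helper: print the effective settings, one per line.
print_case_env() {
  printf 'PROFILE=%s\n' "$PROFILE"
  printf 'LINE_CNT=%s\n' "$LINE_CNT"
  printf 'STAT_SEC=%s\n' "$STAT_SEC"
  printf 'KAFKA_BOOTSTRAP_SERVERS=%s\n' "$KAFKA_BOOTSTRAP_SERVERS"
  printf 'KAFKA_INPUT_TOPIC=%s\n' "$KAFKA_INPUT_TOPIC"
  printf 'KAFKA_OUTPUT_TOPIC=%s\n' "$KAFKA_OUTPUT_TOPIC"
}

resolve_case_env
print_case_env
```

Printing the effective settings before a run is a quick way to confirm that an override on the command line was actually picked up.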

Result Verification

- File Sink: The script runs wpkit stat file and wpkit validate sink-file -v; output is available in data/out_dat/ as events.parsed.prototext (per the file sink config in models/sink/business.d/example.toml)

- Kafka Output: Optionally run the following command to view the output topic (a fresh group is recommended so that messages are not consumed by other consumers)

wpkit kafka consume --brokers ${KAFKA_BOOTSTRAP_SERVERS:-localhost:9092} \

--group wpkit-consume-$$ \

--topic "${KAFKA_OUTPUT_TOPIC:-wp.testcase.events.parsed}"
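A note on the group name in the command above: `$$` expands to the PID of the current shell, so repeated runs from the same interactive session produce the same group. Kafka tracks committed offsets per consumer group, so if the consumer commits offsets (an assumption about wpkit, not confirmed here), a second run from that session may not see earlier messages again. A minimal sketch of a group name that also varies between runs, using a hypothetical helper fresh_group:

```shell
#!/usr/bin/env bash
# Hypothetical helper: build a consumer group name that differs between runs.
# $$ stays constant within one shell session; the epoch-seconds suffix changes
# on each call (two calls within the same second would still collide).
fresh_group() {
  printf 'wpkit-consume-%s-%s\n' "$$" "$(date +%s)"
}

GROUP="$(fresh_group)"
echo "consumer group: $GROUP"
# The value can then replace wpkit-consume-$$ in the command shown above,
# i.e. pass --group "$GROUP" to wpkit kafka consume.
```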

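Kafka (translated from Chinese)

This directory provides a Kafka-based end-to-end verification case that checks whether the unified Kafka Source/Sink connectors work as expected.

- Producer: wpgen writes sample data to Kafka (input topic, default wp.testcase.events.raw)

- Engine: wparse reads the Kafka input, parses it, and routes it to multiple sinks (including a Kafka output topic, default wp.testcase.events.parsed, and a file sink)

- Optional verification: use wpkit kafka consume to verify the messages on the Kafka output topic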

Data Flow Diagram

The diagram below shows the testcase data flow and its key stages (wp.testcase.events.raw/wp.testcase.events.parsed).

flowchart LR

subgraph Producer

WPGEN[wpgen sample\n(writes to Kafka per wpgen.toml)]

end

subgraph Kafka

KAFKA_IN[(KAFKA_INPUT_TOPIC)]

KAFKA_OUT[(KAFKA_OUTPUT_TOPIC)]

end

subgraph Engine

WPARSE[wparse batch\n(-n limits record count, auto-exits)]

SINKS{{Sink Group\n(models/sink)}}

OML[OML mapping/masking]

end

subgraph Verifier

FILE[file sink: events.parsed.prototext]

CONSUME[wpkit kafka consume\n(optional verification)]

end

WPGEN -- produce --> KAFKA_IN

WPARSE -- consume --> KAFKA_IN

WPARSE -- route --> OML --> SINKS

SINKS -- write --> FILE

SINKS -- produce --> KAFKA_OUT

CONSUME -- verify --> KAFKA_OUT

If Mermaid rendering is not supported, refer to the ASCII version:

wpgen(sample) --> Kafka(KAFKA_INPUT_TOPIC) --> wparse(batch) --> [OML/route] --> sinks{file,kafka}

sinks --> file: data/out_dat/events.parsed.prototext

sinks --> Kafka(KAFKA_OUTPUT_TOPIC) --> (optional) wpkit kafka consume verification

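Directory Structure

- conf/wparse.toml: Engine main config (directories, concurrency, logging, etc.)

- conf/wpgen.toml: Data generator config (already points to the Kafka sink and overrides the input topic)

- topology/source/wpsrc.toml: Source routing. Contains two [[sources]] entries: kafka_input subscribes to the input topic; kafka_output_tap subscribes to the output topic for self-testing/demo and can be disabled as needed

- topology/sink/business.d/example.toml: Business sink routing (contains a file sink and a Kafka sink)

- models/oml/...: OML models (result field mapping/masking)

- case_verify.sh: One-click verification script (starts wparse → wpgen sends data → verification)

Note: Source and Sink connector IDs reference the definitions under connectors/ in the repository root:

- connectors/source.d/30-kafka.toml: id=kafka_src (allows overriding topic/group_id/config)

- connectors/sink.d/30-kafka.toml: id=kafka_sink (allows overriding topic/config/num_partitions/replication/brokers/fmt)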

Prerequisites

- Kafka running locally; the default address is localhost:9092 (or override it via environment variables, see below)

Quick Start

Enter the case directory and run the script (debug by default):

cd extensions/wp-connectors/testcase

./case_verify.sh # or ./case_verify.sh release

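Main script steps:

- Clean the run directory (preserving conf/ templates), build binaries to target/<profile>, and add them to PATH

- Run wpkit conf check for a config self-check; clean the data directory

- Start wparse in the background (-n limits the processing count; it exits automatically on completion)

- Run wpgen sample to generate sample data and write it to the Kafka input topic

- Wait for wparse to exit and perform file sink verification (optional)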

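Parameters

The script supports the following optional environment variables:

- PROFILE: Build and run profile (debug|release), default debug

- LINE_CNT: Number of sample records to generate/process, default 3000

- STAT_SEC: Statistics print interval (seconds), default 3

- KAFKA_BOOTSTRAP_SERVERS: Kafka address, default localhost:9092

- KAFKA_INPUT_TOPIC: Input topic (wpgen writes, wparse consumes), default wp.testcase.events.raw

- KAFKA_OUTPUT_TOPIC: Output topic (written by the wparse Kafka sink), default wp.testcase.events.parsed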

Example:

KAFKA_BOOTSTRAP_SERVERS=127.0.0.1:9092 KAFKA_INPUT_TOPIC=my_in KAFKA_OUTPUT_TOPIC=my_out ./case_verify.sh

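Result Verification

- File Sink: The script runs wpkit stat file and wpkit validate sink-file -v; events.parsed.prototext then appears under data/out_dat/ (per the file sink config in models/sink/business.d/example.toml)

- Kafka Output: Optionally run the following command to view the output topic (a brand-new group is recommended so that messages are not read by other consumers)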

wpkit kafka consume --brokers ${KAFKA_BOOTSTRAP_SERVERS:-localhost:9092} \

--group wpkit-consume-$$ \

--topic "${KAFKA_OUTPUT_TOPIC:-wp.testcase.events.parsed}"

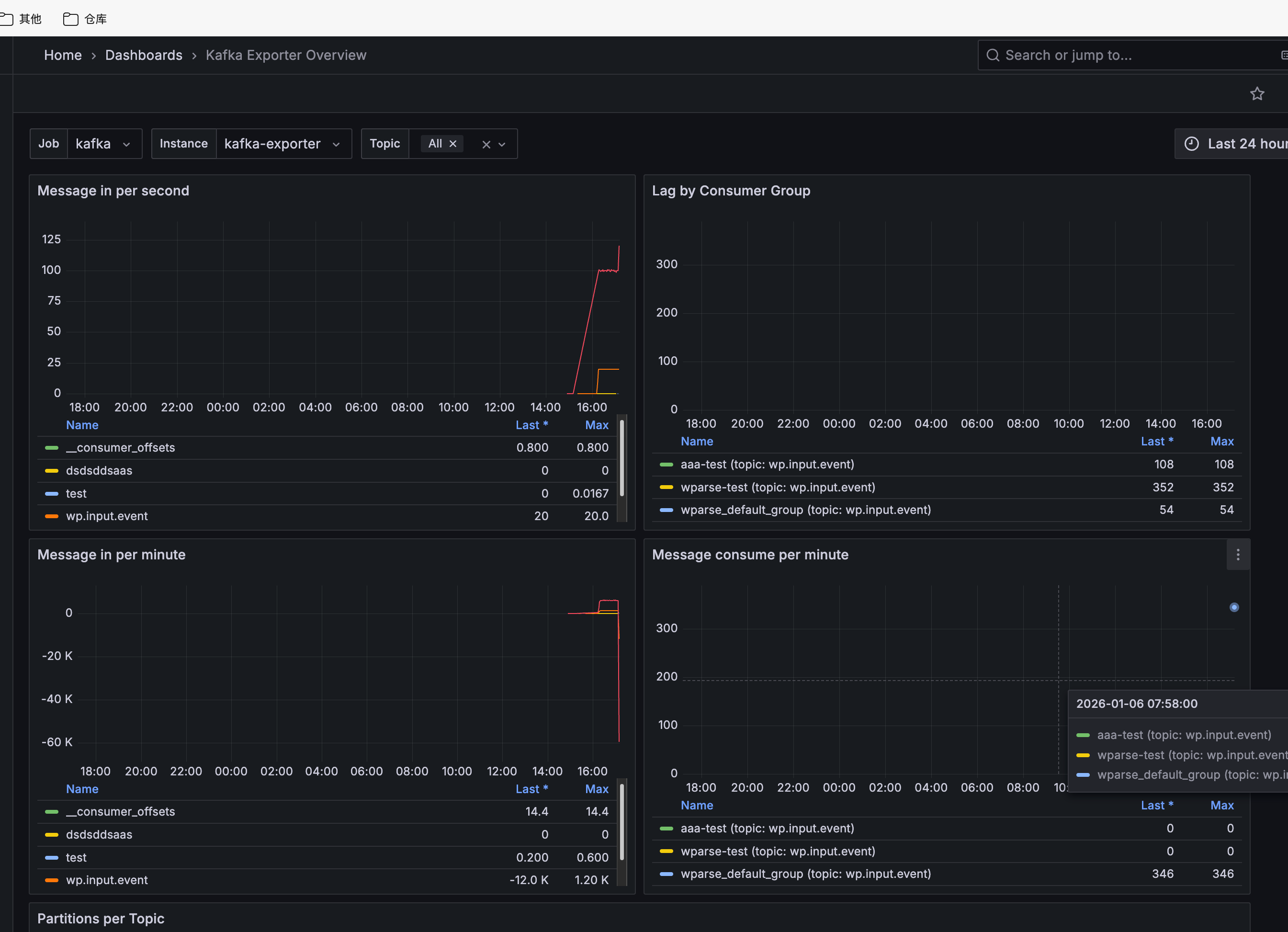

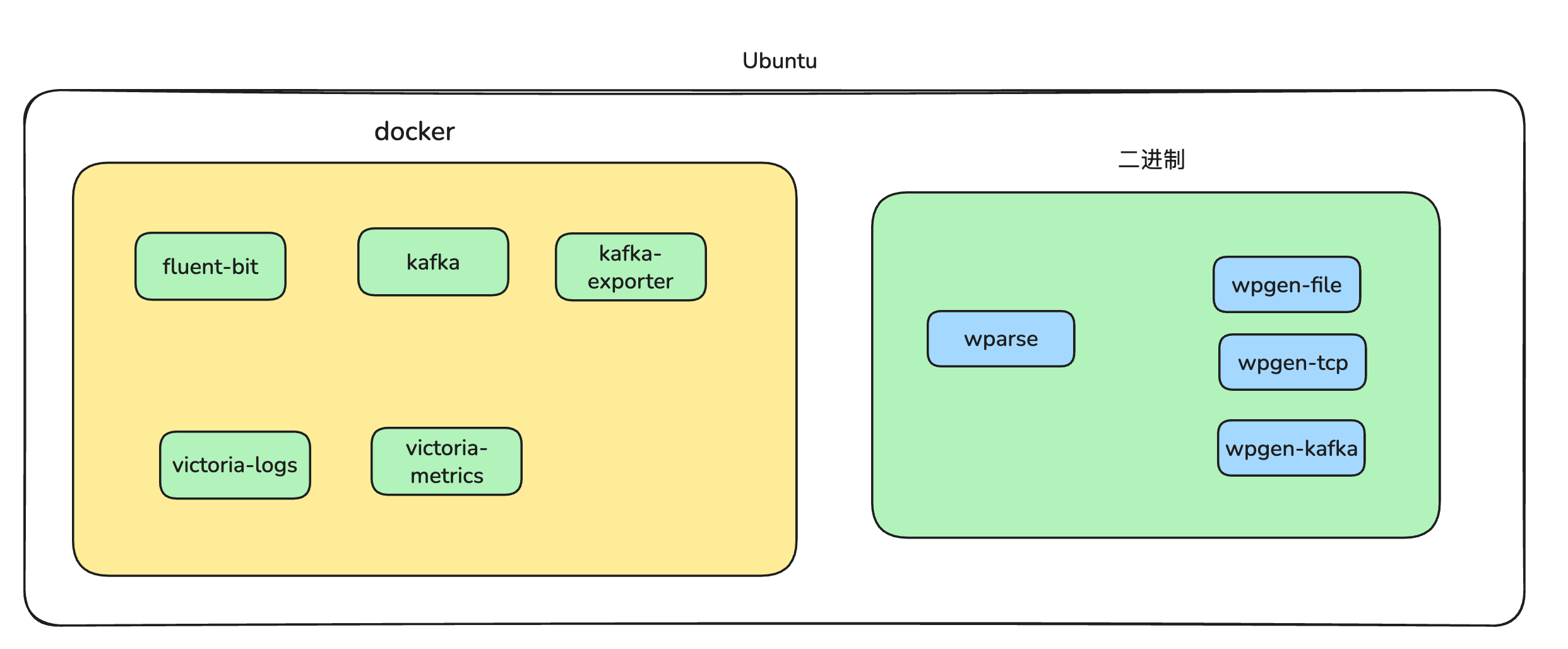

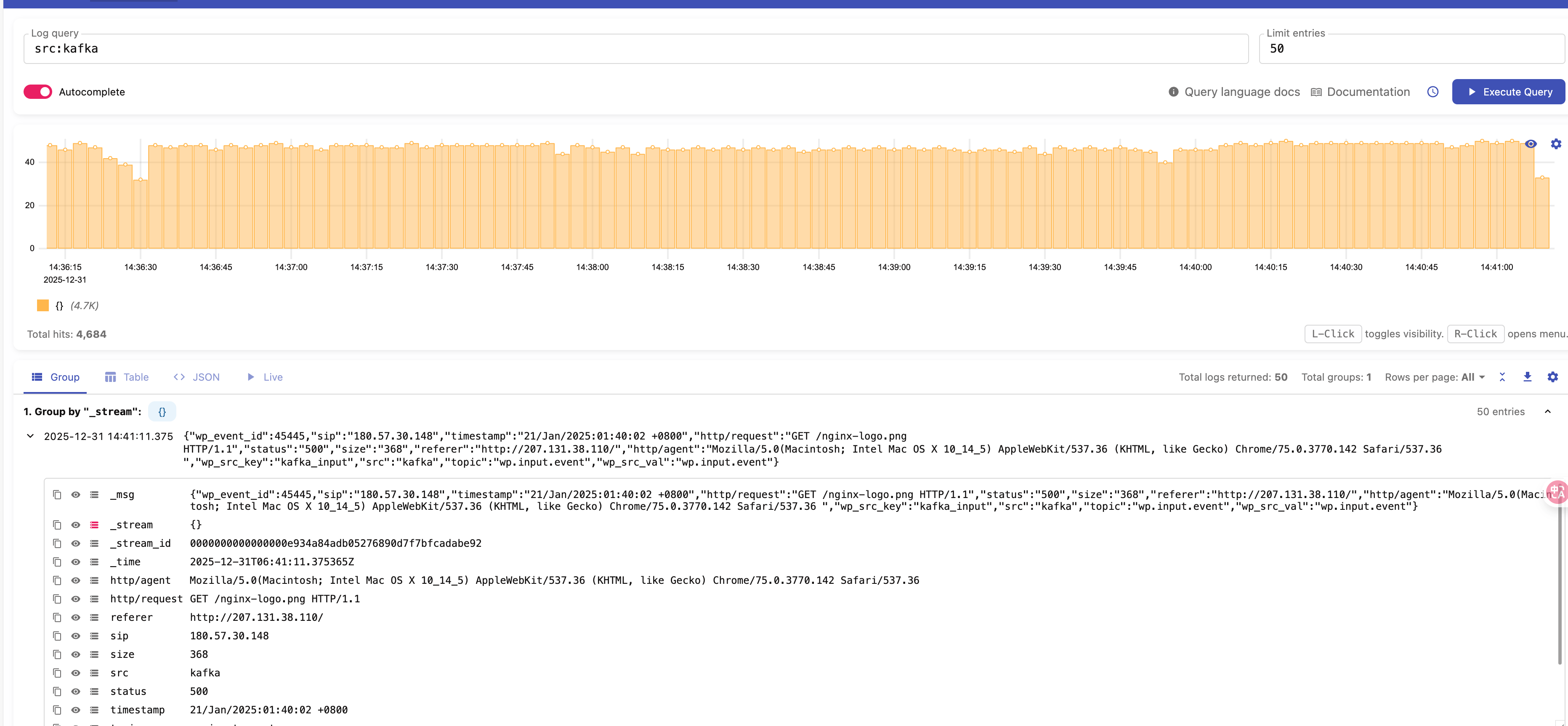

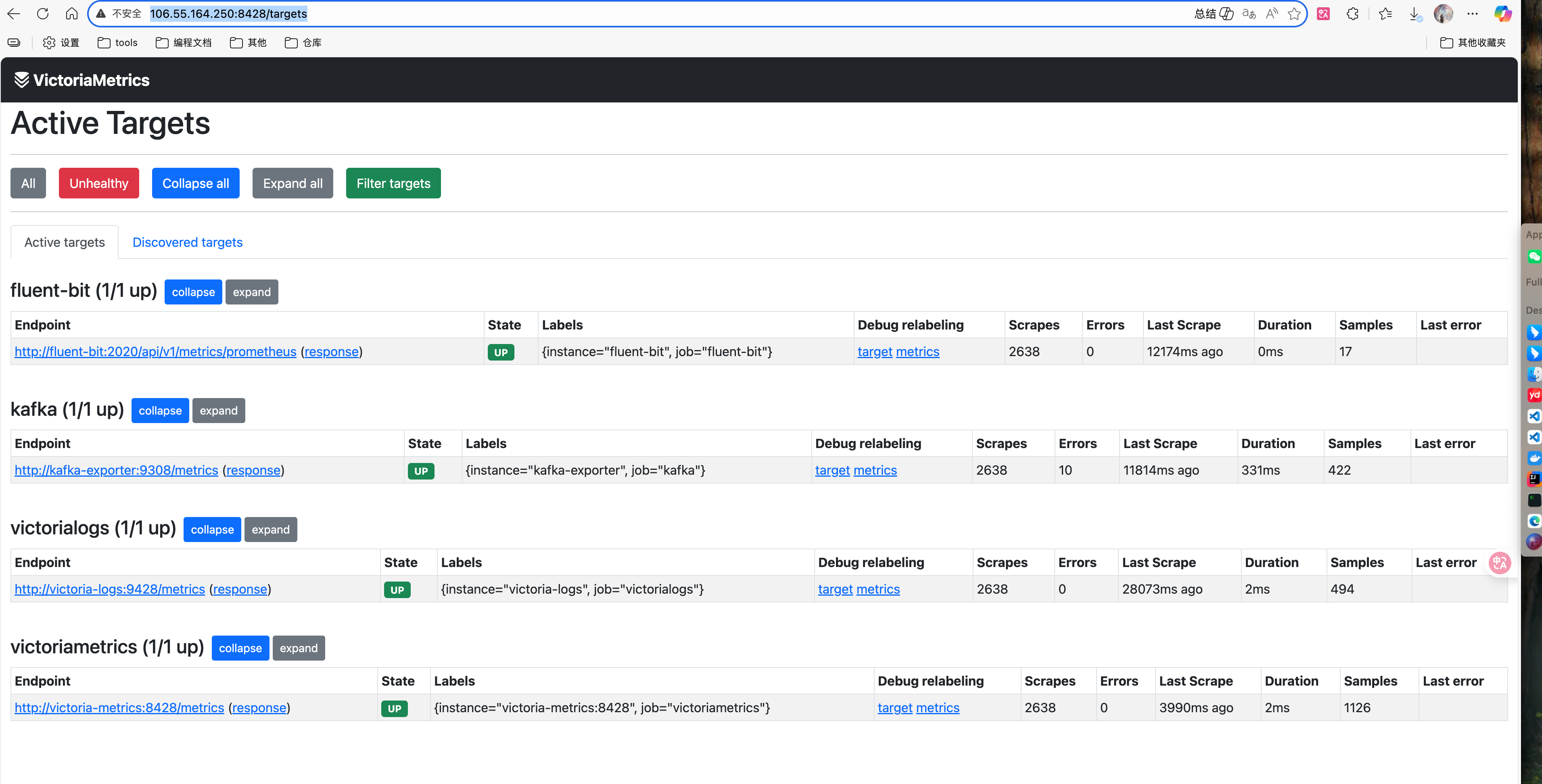

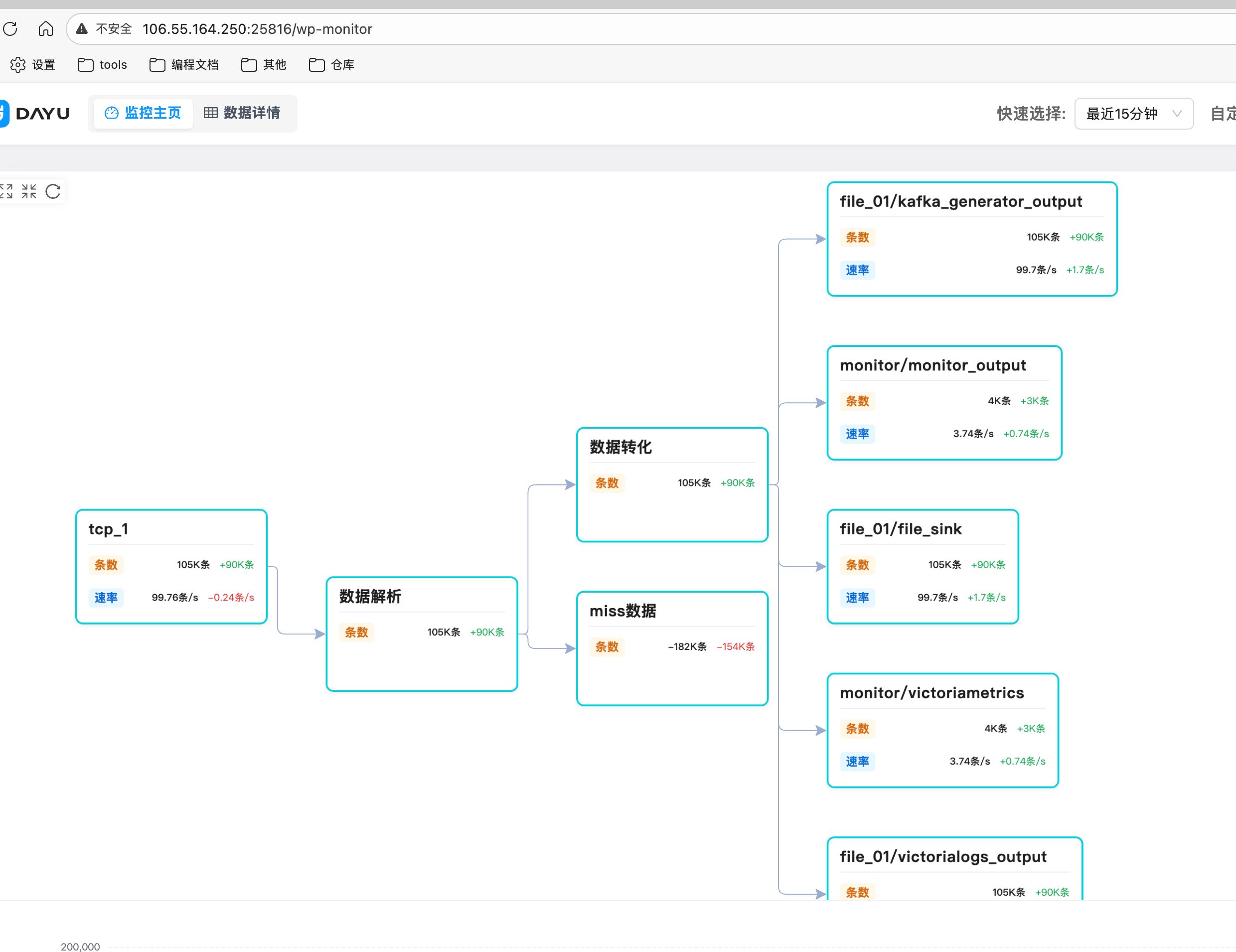

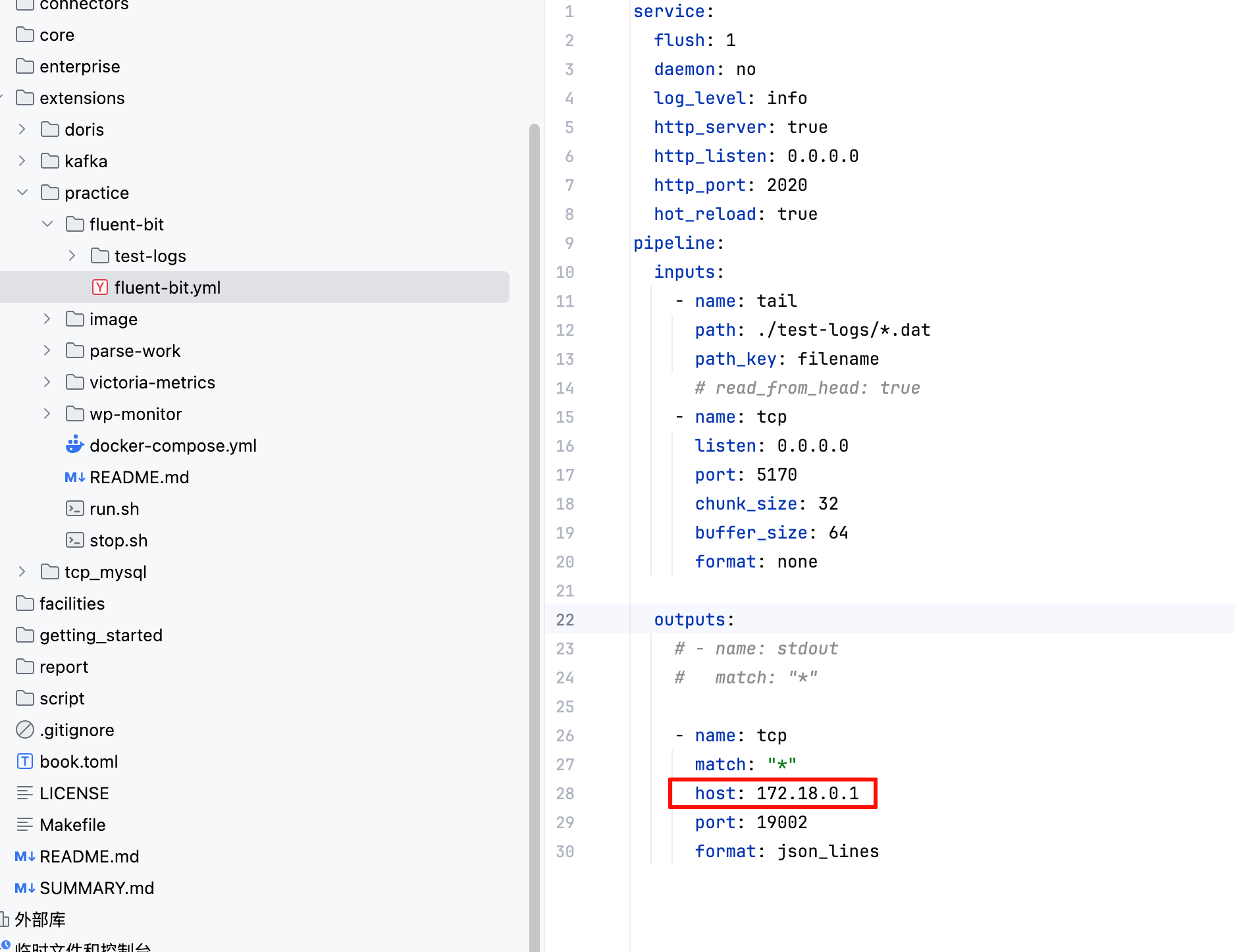

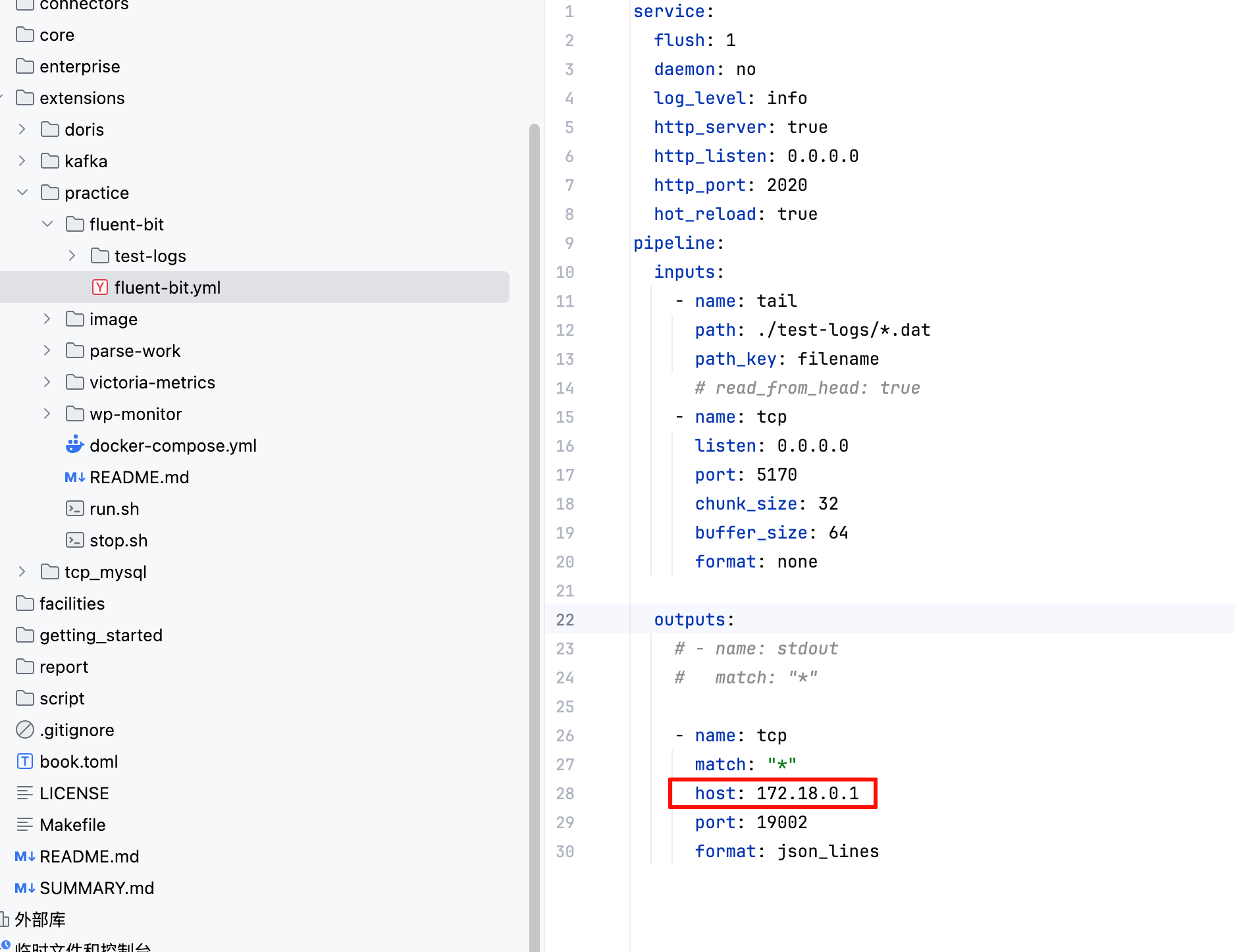

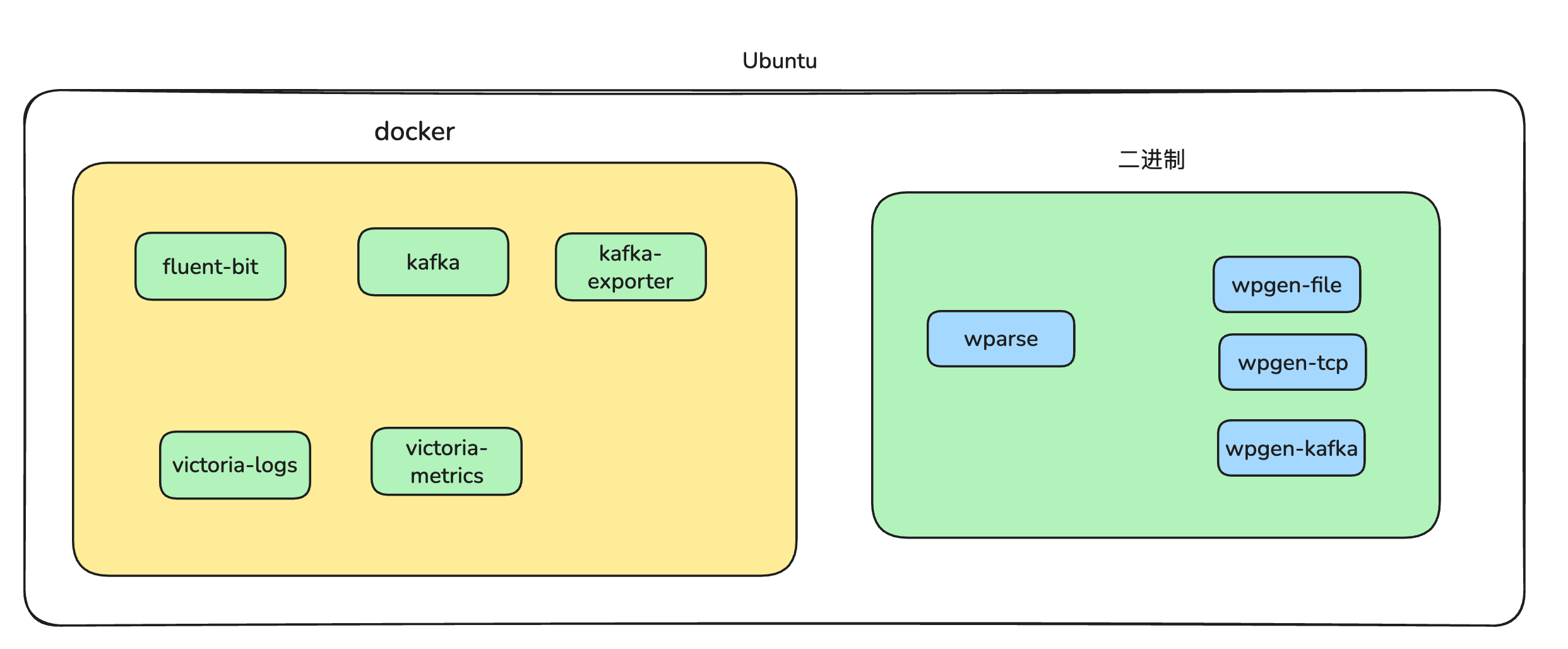

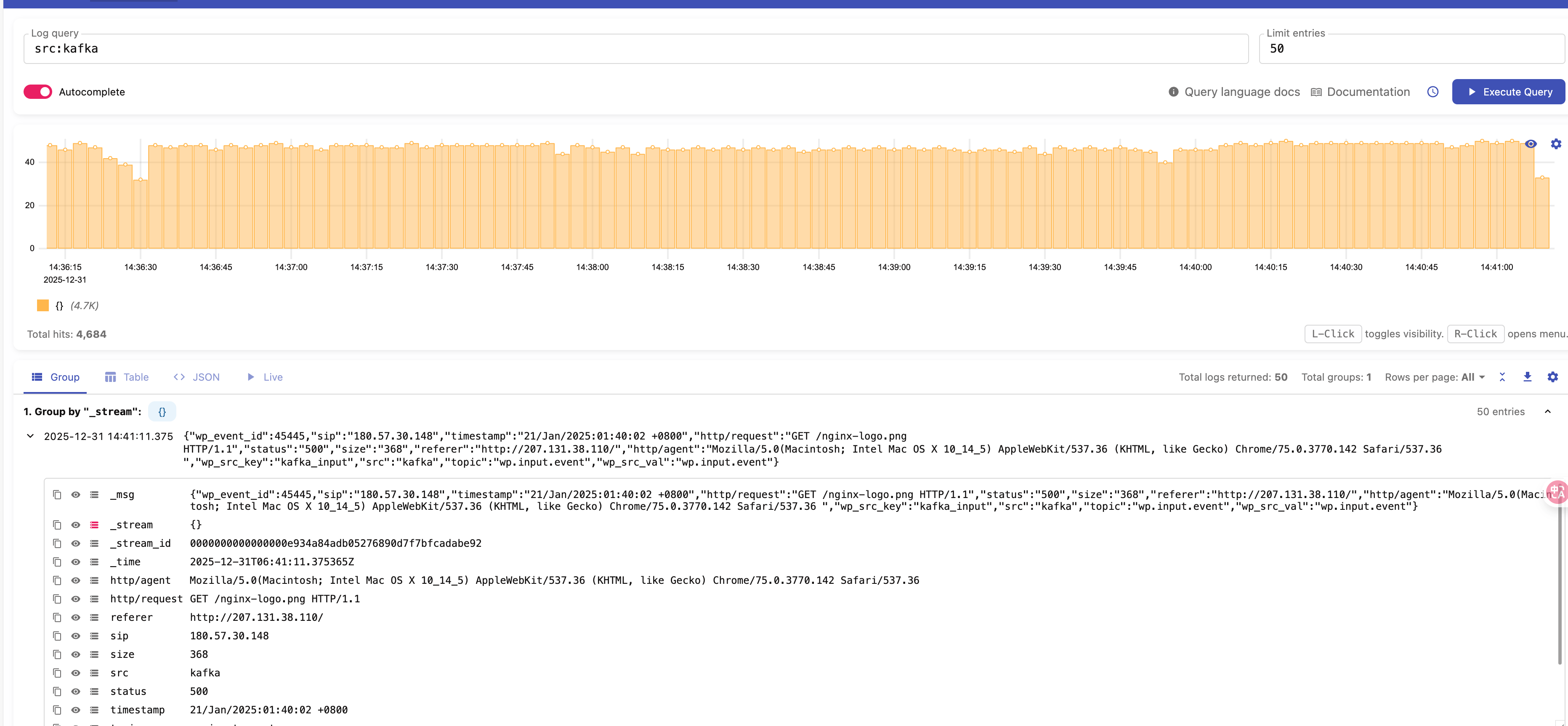

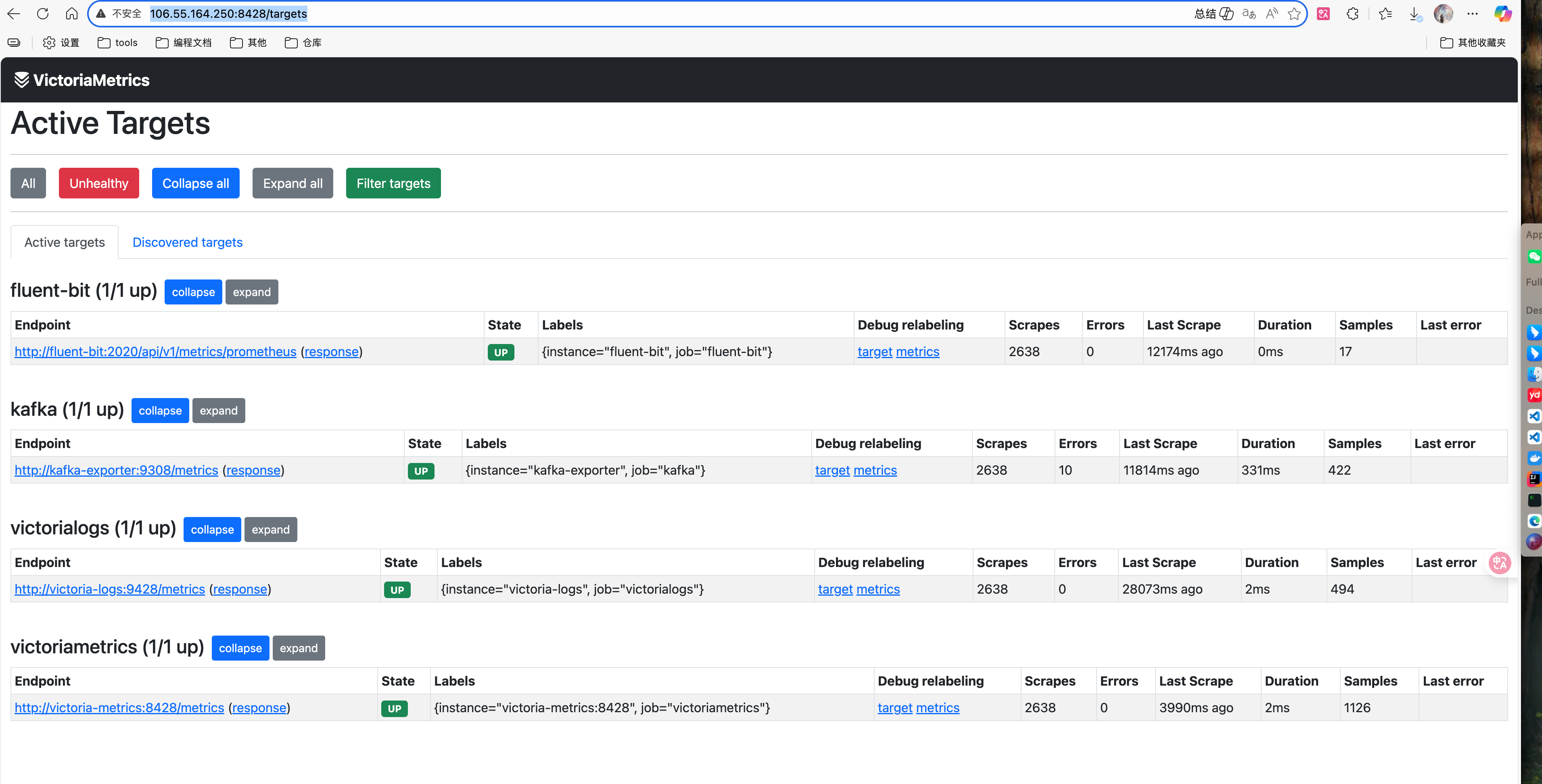

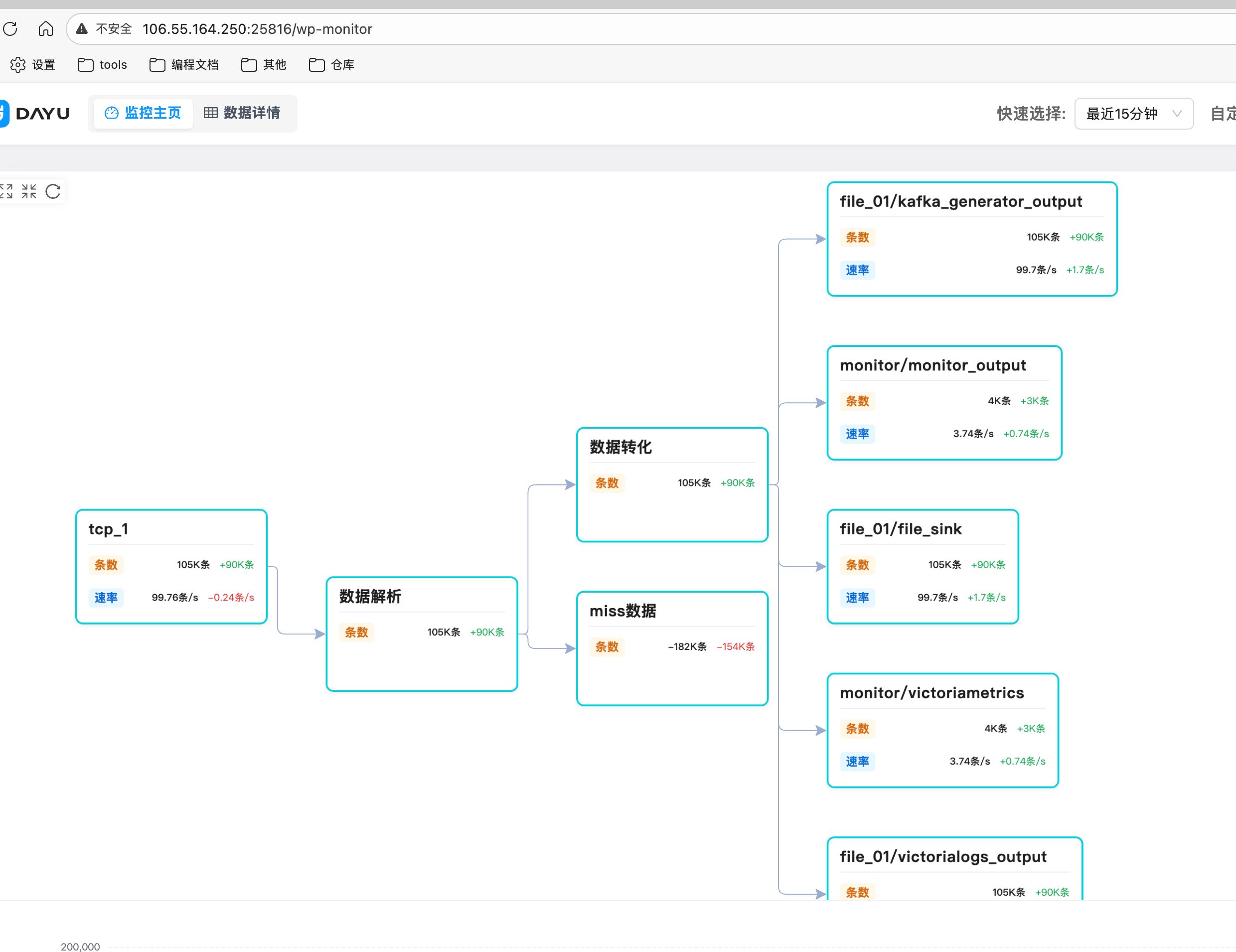

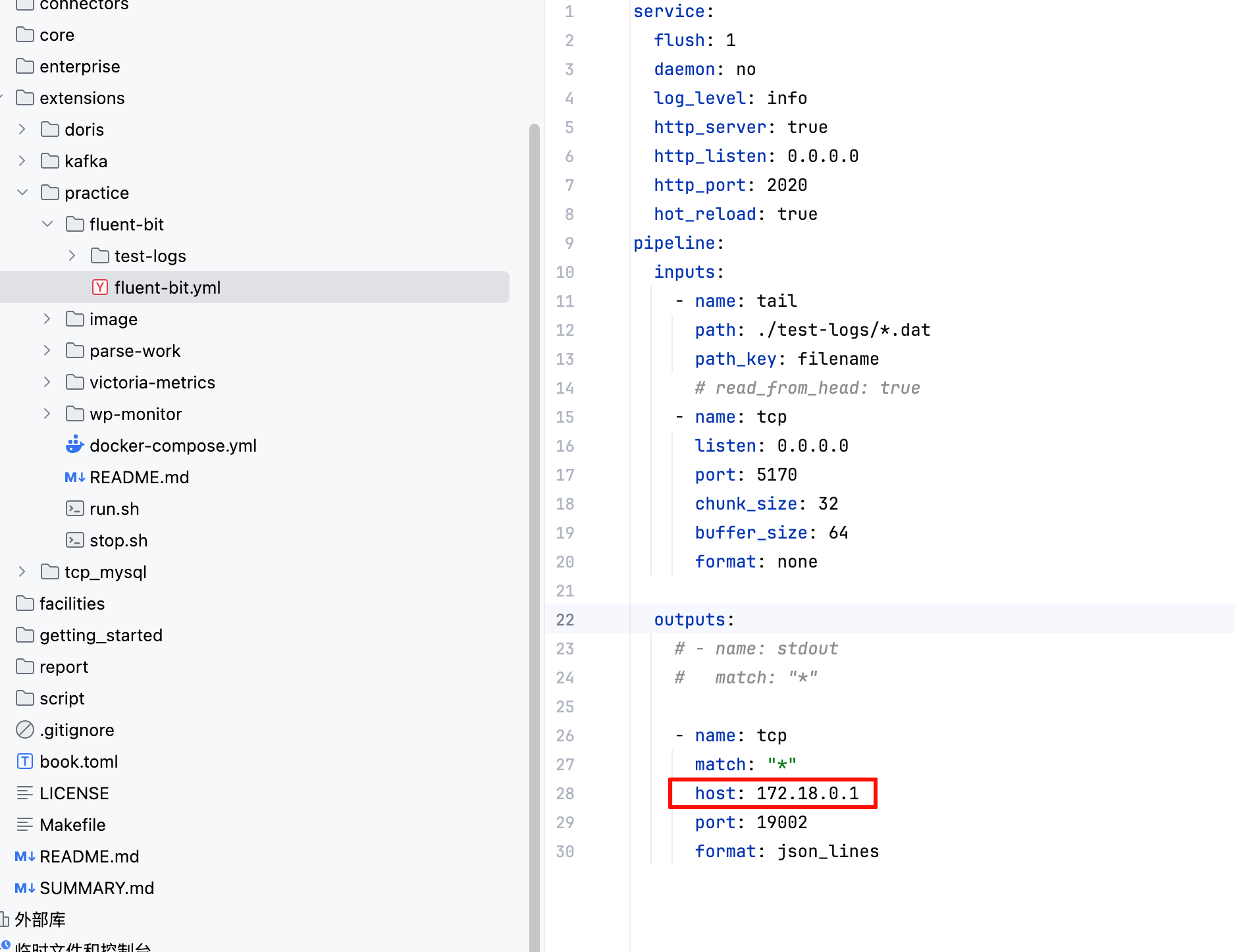

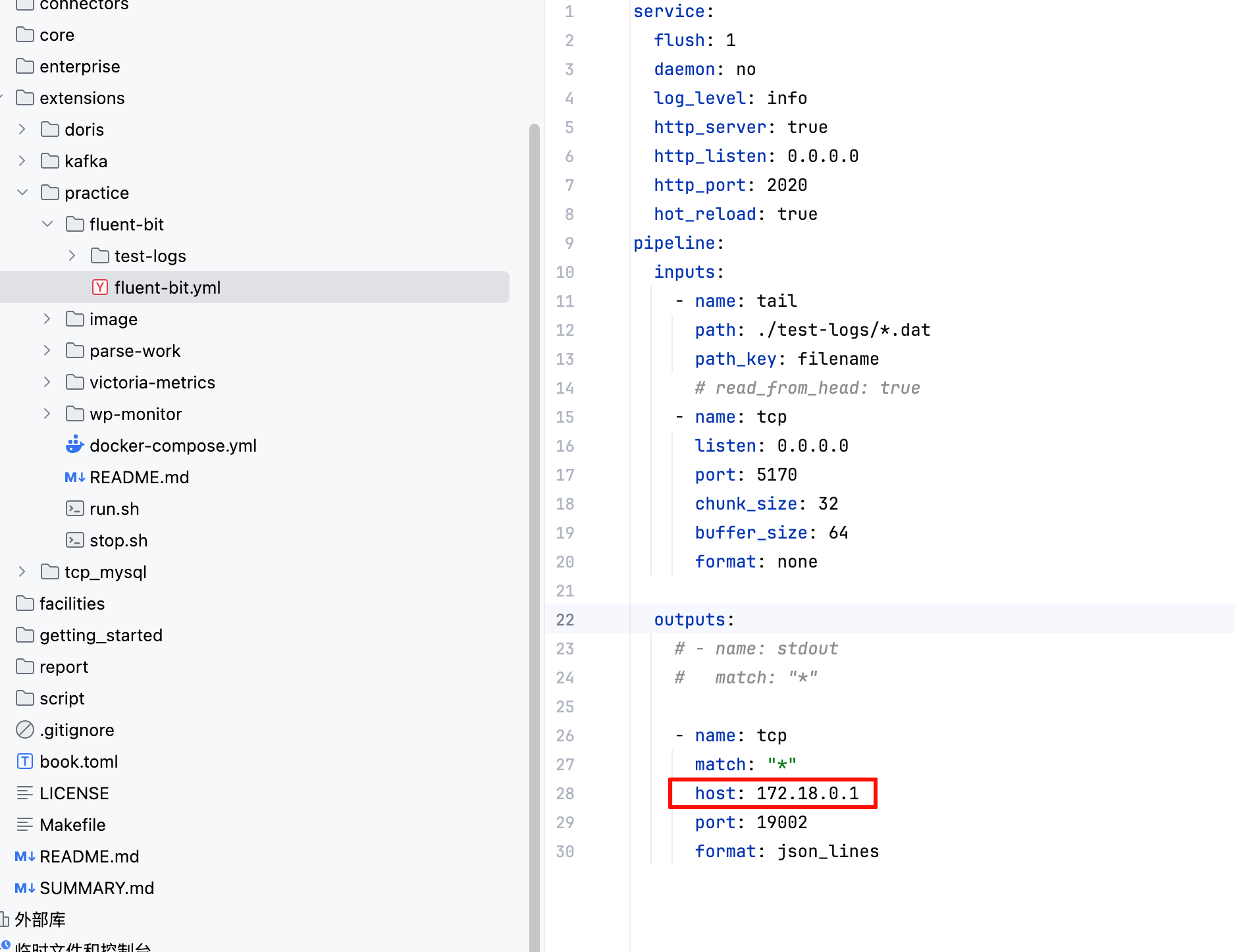

Practice - Real-World Multi-Source Monitoring

Overview